Increasing data center temperatures to reduce energy costs

Periodically we get questions about data center temperatures and energy savings. They usually ask whether commercial data centers can save energy by raising the temperature of the data hall. Some form of this question surfaces regularly because it seems like a common-sense opportunity. But there is a lot to consider before running out and adjusting your “thermostat.”

The most recent question took this form: “I have followed ASHRAE for some years and have increased temperature thresholds in rooms over which I have full control. If there are cost and efficiency gains by accepting higher temperatures, is there talk of marketing this (i.e., segregated/containerized hotter rooms) to colo customers who believe they can take the heat to save on monthly recurring charges? If so, I have not seen it yet.”

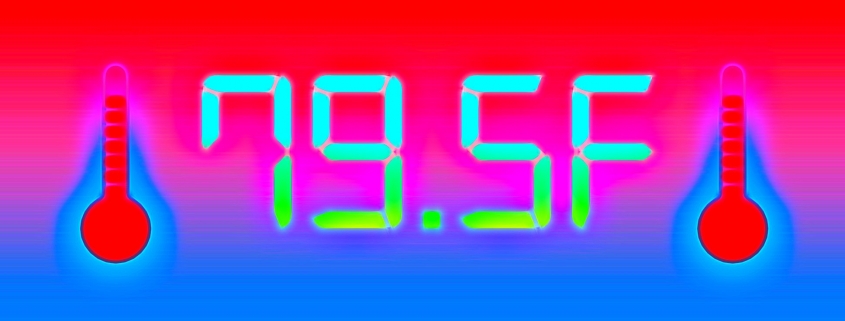

This really is a great question, because it means we are thinking about energy conservation and optimization. At first glance this seems like an easy avenue to pursue. In fact, many IT gear makers already support higher inlet temperatures, some as high as 95 degrees F or more. Earlier this year, our CTO Chris Brown provided the short answer: “In general, there are cost savings and energy efficiency gains to be realized by increasing supply temperatures in data halls.” He then went on to add, “BUT it is not as simple as it sounds, because a lot of factors need to be considered before it’s a ‘slam-dunk.’”

So let’s dive into these factors in more detail:

- Increasing the inlet supply temperature often results in increased airflow requirements. Depending on the design of the facility, this may overtax the fans and significantly increase their energy consumption if the system is tightly designed around a given airflow. Remember that a non-trivial amount of the energy spent on cooling is consumed by the fan motors themselves, so running fans faster WILL reduce any savings seen with higher temperatures. An Energy Star report on fan power consumption states that “a reduction of 10% in fan speed reduces that fan’s use of electricity by approximately 25%. A 20% speed reduction yields electrical savings of roughly 45%.” For this discussion we’d be doing the reverse: increasing the fan speed, with the resulting increase in power draw.

- Manufacturers usually warrant their equipment only when it is used within their specified operating conditions, including the range of temperatures at the inlet. And while some manufacturers have widened those ranges to allow for warmer inlet temperatures, the data hall must be cooled to the LOWEST operating limit specified across all equipment installed in that hall. In other words, your thousand Dell servers may happily support inlets of 85 or 95 degrees F, but your Cisco Nexus sitting at the end of each row may require 80F or less. And the storage appliance may specify 78F. Each piece of gear has its own allowable environment to remain under warranty. And there is no practical way to separate the various types of gear across different ‘temperature zones’ in a commercial data center even if such zones were available, which they are not.

- Most enterprise-grade IT gear also includes in-chassis fans, which vary in speed as the inlet temperature goes up. Again, raising the hall and inlet temperatures will likely cause all of the in-chassis fans to run much faster and draw significantly more current. (Ironically, this is great for the old macro PUE calculation, since chassis fan power counts as IT load, but horrible when considering actual energy usage for cooling.) And as Chris pointed out in his response, there is a secondary concern when using in-chassis fans more heavily: “doing so can significantly raise the sizing requirements on UPS systems, because all of that new in-chassis fan power is now considered ‘IT load’ from a design standpoint.” A huge factor, to be sure.

- The incentive may not exist. Most colocation providers write SLAs (service level agreements) specifying inlet temperatures UP to and including the ASHRAE “Recommended” 80 degrees F. (Many still contractually guarantee an inlet of 74F.) Chris advises that he has not run across any client agreements that offer different rates based on willingness to operate in other environmental conditions (ASHRAE classes A1 through A4, for instance). This is likely because providers with existing facilities do not have data halls designed to vary the supply temperature in such granular neighborhoods. Hot/cold aisles may exist, but inlet temperatures are not offered on a sliding-scale pricing schedule. And since not all tenants in any given hall will want an elevated inlet supply air temperature, those operators and their contracts do not provide any variants.

- Failure rates of IT gear have not been studied sufficiently at the A3 and higher temperature ranges. Intel has been studying this question for years and regularly concludes that servers running up through the A2 range (95 degrees F) seem to have typical failure rates. They also state that more study is required before the same can be said of A3 and A4. And then there is the network, storage and security gear from hundreds of manufacturers, all of which would need the same scrutiny.

- And keep in mind that running a data center above ASHRAE’s “Recommended” levels might limit the number of hours data center technicians can remain on the IT floor, due to local regulatory, health and safety concerns. As cited by OSHA, working conditions between 68 F and 78 F are ideal for the workplace. A number of other health and safety journals (including Healthday) go into more detail about what to expect if technicians are required to work in hotter data centers. Their summary includes higher rates of accidents and more misjudgments as the temperature rises above 73 degrees F, with significant concerns for employees working in the conditions identified by ASHRAE’s A3 and A4.
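To put rough numbers on the fan-speed point in the first bullet, here is a minimal sketch. It assumes the idealized fan affinity law (power scales with the cube of speed), which is a textbook approximation and not the model behind the Energy Star figures quoted above; the function name `fan_power_ratio` is hypothetical. Real fans have fixed losses, so measured numbers will differ somewhat.

```python
def fan_power_ratio(speed_ratio: float, exponent: float = 3.0) -> float:
    """Relative fan power for a relative fan speed.

    Assumption: idealized affinity law, power ~ speed ** 3.
    A speed_ratio of 1.10 means the fan runs 10% faster than baseline.
    """
    if speed_ratio < 0:
        raise ValueError("speed_ratio must be non-negative")
    return speed_ratio ** exponent


if __name__ == "__main__":
    # Compare slowing down (the quoted savings case) with speeding up
    # (the case that matters when inlet temperatures are raised).
    for change in (-0.20, -0.10, 0.10, 0.20):
        ratio = fan_power_ratio(1.0 + change)
        print(f"fan speed {change:+.0%} -> fan power {ratio - 1.0:+.1%}")
```

Under this idealized model the penalty for speeding up is larger than the saving from slowing down by the same percentage: a 10% speed increase costs about 33% more fan power, and a 20% increase about 73% more.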

So can higher-temperature halls be effective? Absolutely, if they were designed to run hotter. If the mechanical design accounted for a much hotter hall (gasp: A3 or A4?) and if ALL of the IT gear to be installed in that hall was purchased and warrantied for that range, then absolutely this works. But few if any halls are built or operated that way today, because doing so would severely limit the choice of gear that could be included on the raised floor.
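The "lowest limit wins" rule can be sketched in a few lines. The inventory below is hypothetical and reuses the illustrative figures from the warranty bullet above (servers at 95F, row switches at 80F, a storage appliance at 78F); the function name `hall_inlet_limit` is also an assumption made for illustration.

```python
# Hypothetical inventory: maximum warrantied inlet temperature (deg F)
# per equipment type, using the illustrative figures from the text.
max_inlet_f = {
    "servers": 95.0,
    "row switches": 80.0,
    "storage appliance": 78.0,
}


def hall_inlet_limit(limits: dict[str, float]) -> tuple[str, float]:
    """Return the most restrictive equipment type and its inlet limit.

    The hall can run no warmer than the lowest maximum across all gear.
    """
    if not limits:
        raise ValueError("at least one equipment limit is required")
    name = min(limits, key=limits.get)
    return name, limits[name]


if __name__ == "__main__":
    name, limit = hall_inlet_limit(max_inlet_f)
    print(f"Hall inlet ceiling: {limit:.0f}F, set by {name}")
```

With these figures the storage appliance caps the whole hall at 78F, no matter how much heat the servers can tolerate.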

Directionally a great discussion… it’s just the retrofit of reality discussion that gets awkward…

2020