Avoid Target Fixation in the Data Center

Removing heat is more effective than adding cooling

By Steve Press, with Pitt Turner, IV

According to Wikipedia, the term target fixation was used in World War II fighter-bomber pilot training to describe why pilots would sometimes fly into targets during a strafing or bombing run. Since then, others have adopted the term. The phenomenon is most commonly associated with scenarios in which the observer is in control of a high-speed vehicle or other mode of transportation. In such cases, the operators become so focused on an observed object that their awareness of hazards or obstacles diminishes.

Motorcyclists know about the danger of target fixation. According to both psychologists and riding experts, focusing too intently on a pothole or any other object may lead to bad choices. It is better, they say, to focus on your ultimate goal. In fact, one motorcycle website advises riders, “…once you are in trouble, use target fixation to save your skin. Don’t look at the oncoming truck/tree/pothole; figure out where you would rather be and fixate on that instead.” Data center operators can experience something like target fixation when they find themselves continually adding cooling to raised floor spaces without ever solving the underlying problem. They can arrive at better solutions by focusing on the ultimate goal rather than on intermediate steps.

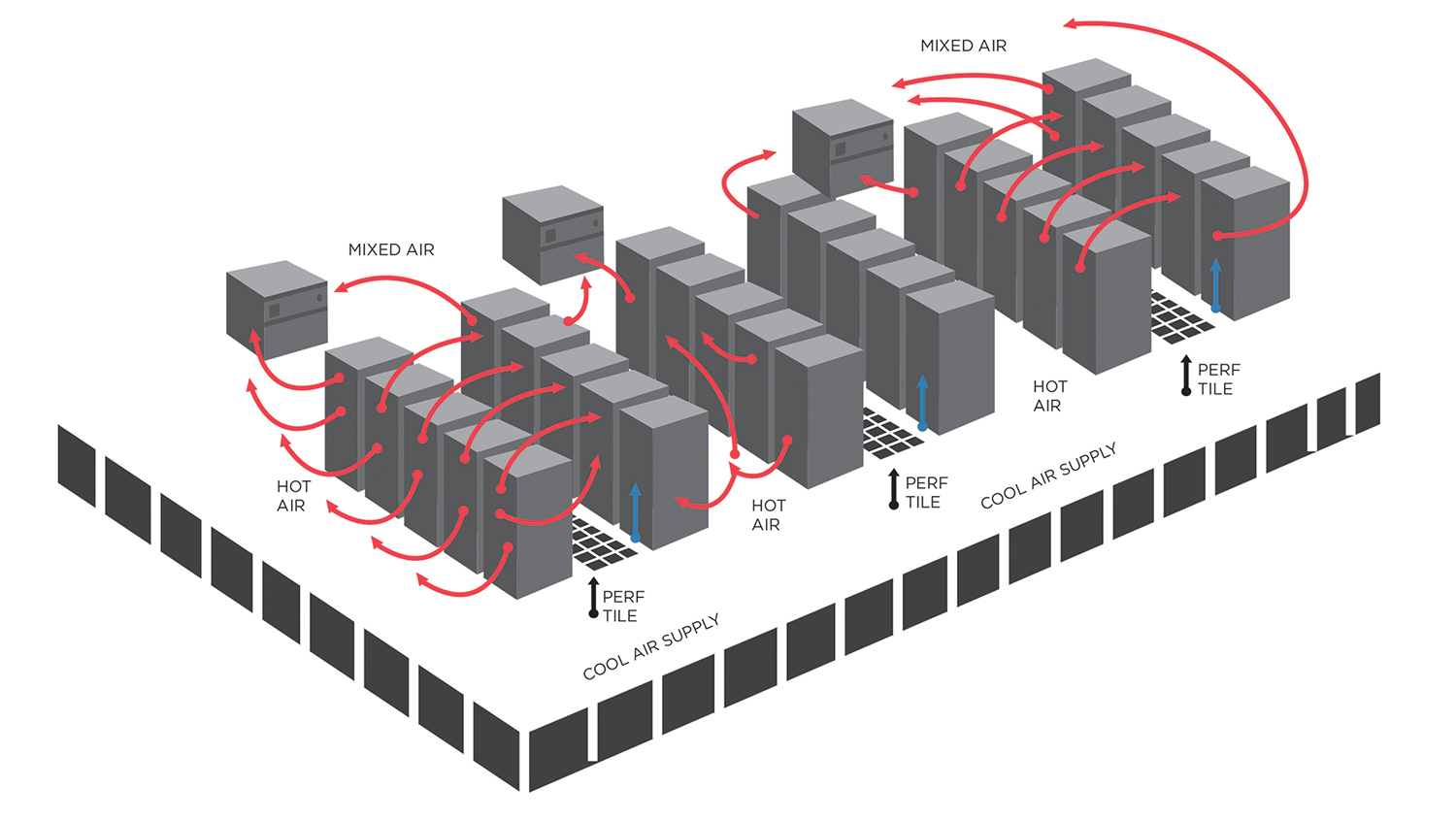

A Hot Aisle/Cold Aisle solution that focuses on pushing more cold air through the raised floor is often defeated by bypass airflow

We have been in raised floor environments that had hot spots just a few feet away from very cold spaces. The industry usually tries to address this problem by adding yet more cooling and being creative about air distribution. While other factors are examined, we always wind up adding more cooling capacity.

But, you know what? Data centers still have a cooling problem. In 2004, an industry study found that our computer rooms had nearly 2.6 times the cooling capacity warranted by IT loads. And while the industry has been focused on solving this cooling problem, a recent industry study reports that the ratio of cooling capacity to IT load is now 3.9.

Could it be that the data center industry has target fixation on cooling? What if we focused on removing heat rather than adding cooling?

Science and physics instructors teach that you can’t add anything to cool an object; to cool an object, you have to remove heat. Computers generate a watt of heat for every watt they use to operate. Unless you remove that heat, temperatures will increase, potentially damaging critical IT components. Focusing on removing the heat is a subtle but significantly different approach from adding cooling equipment.
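As a rough illustration of what removing that heat means in airflow terms, the sketch below uses the common rule-of-thumb sensible heat relation for standard sea-level air, BTU/hr = 1.08 × CFM × ΔT (°F), together with 1 W ≈ 3.412 BTU/hr. The function name, the 5 kW rack, and the temperature rises are illustrative assumptions, not figures from any particular computer room.

```python
BTU_PER_HR_PER_WATT = 3.412   # 1 W of IT load becomes about 3.412 BTU/hr of heat
SENSIBLE_HEAT_FACTOR = 1.08   # rule-of-thumb constant for standard sea-level air


def airflow_cfm(it_load_watts: float, delta_t_f: float) -> float:
    """Approximate airflow (CFM) needed to carry away a given heat load.

    delta_t_f is the temperature rise, in degrees Fahrenheit, of the air
    as it passes through the equipment.
    """
    if delta_t_f <= 0:
        raise ValueError("delta_t_f must be positive")
    heat_btu_per_hr = it_load_watts * BTU_PER_HR_PER_WATT
    return heat_btu_per_hr / (SENSIBLE_HEAT_FACTOR * delta_t_f)


if __name__ == "__main__":
    rack_watts = 5000  # hypothetical rack load, chosen only for illustration
    for delta_t in (10, 20, 30):
        cfm = airflow_cfm(rack_watts, delta_t)
        print(f"{rack_watts} W at a {delta_t} F rise: about {cfm:.0f} CFM")
```

Under these assumptions, doubling the temperature rise of the air halves the airflow needed to carry the same heat, which is one reason it helps to keep heated return air from mixing with the cool supply.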

Like other data center teams, the Kaiser Permanente Data Center Solutions team had been frustrated by cooling problems. For years, we had been trying to make sure we put enough cool air into the computer rooms. Common solutions addressed cubic feet per minute under the floor, static pressure through the perforated tiles, and moving cold air to the top of 42U computer racks. Although important, these tasks resulted from our fixation on augmenting cooling rather than removing heat. It occurred to us that our conceptual framework might be limiting our efforts, and the language that we used reinforced that framework.

We decided we would no longer think of the problem as a cooling problem. We decided we were removing heat, concluding that after removing all the heat generated by the computer equipment from a room, the only air left would be cool, unheated air. That realization was exciting. A simple change in wording opened up all kinds of possibilities. There are lots of ways to remove heat besides adding cool air.

Suddenly, we were no longer putting all our mental effort into dreaming up ways to distribute cold air to the data center’s computing equipment. Certainly, the room would still require enough perforated tiles and cool air supply openings for air with less heat to flow, but no longer would we focus on increasingly elaborate cool air distribution methods.

Computer equipment automatically pulls in surrounding air. If that surrounding air contains less heat, the equipment doesn’t have to work as hard to stay cool. The air with less heat would pick up heated air from the computer equipment and carry it on its way to our cooling units. Sounds simple, right?

In this particular case, we were just thinking of air as the working medium to move heat from one location to another; we would use it to blow the hot air around, so to speak.

Another way of moving heat is to use cool outdoor air to push heated air off the floor, eliminating the need to create your own cool air. And, there are more traditional approaches, where air passes through a heat transfer system, transferring its heat into another medium such as water in a refrigeration system. All of these methods eject heat from the space.

We didn’t know if it would work at first. We understood the overall computer room cooling cycle: the computer room air handling (CRAH) units cool air, the cool air gets distributed to computer rooms and blows hot air off the equipment and back to the CRAH units, and the CRAH units’ cooling coils transfer the heat to the chiller plant.

But we’d shifted our focus to removing heat rather than cooling air; we’d decided to look at the problem differently. Armed with the new wireless temperature sensor technology available from various manufacturers, we were able to capture temperature data throughout our computer rooms for a very small investment. This allowed us to actually visualize the changes we were making and trend performance data.

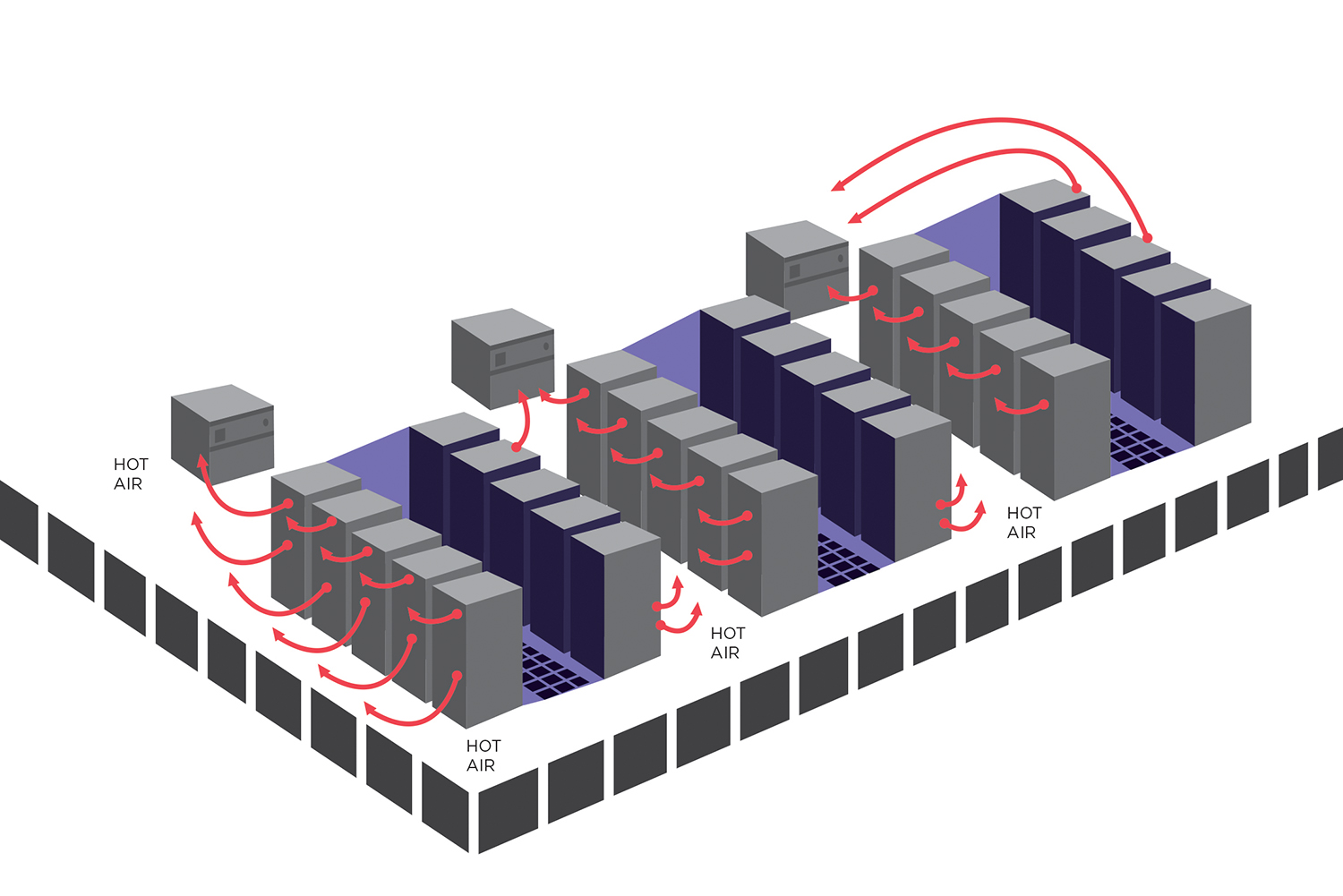

Our team became obsessed with getting heat out of the equipment racks and back to the cooling coils so it could be taken out of the building, using the appropriate technology at each site (e.g., air-side economizers, water-side economizers, mechanical refrigeration). We improved our use of blanking plates and sealed penetrations under racks and at the edges of the rooms. We looked at containment methods: Cold Aisle containment first, then Hot Aisle containment, and even ducted cabinet returns. All these efforts focused on moving heat away from computer equipment and back to our heating, ventilation, and air conditioning equipment. Making sure cool air was available became a lower priority.

To our surprise, we became so successful at removing heat from our computer rooms that the cold spots got even colder. As a result, we reduced our use of perforated tiles and turned off some cooling units, which is where the real energy savings came in. We not only turned off cooling units, but also increased the load on each unit, further improving its efficiency. The overall efficiency of the room started to compound quickly.

We started to see dramatic increases in efficiencies. These showed up in the power usage effectiveness (PUE) numbers. While we recognized that PUE only measures facility overhead and not the overall efficiency of the IT processes, reducing facility overhead was the goal, so PUE was one way to measure success.
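To make the overhead relationship concrete: PUE is total facility power divided by IT equipment power, so trimming cooling overhead lowers the number even when the IT load is unchanged. The sketch below uses made-up loads (a 1,000 kW IT load with 800 kW, then 600 kW, of facility overhead) purely for illustration; they are not Kaiser Permanente measurements.

```python
def pue(it_load_kw: float, overhead_kw: float) -> float:
    """Power usage effectiveness: total facility power / IT equipment power."""
    if it_load_kw <= 0:
        raise ValueError("it_load_kw must be positive")
    return (it_load_kw + overhead_kw) / it_load_kw


if __name__ == "__main__":
    it_load_kw = 1000.0  # hypothetical IT load
    # Hypothetical facility overhead before and after airflow improvements.
    for label, overhead_kw in (("before", 800.0), ("after", 600.0)):
        print(f"PUE {label}: {pue(it_load_kw, overhead_kw):.2f}")
```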

To measure the success in a more granular way, we developed the Computer Room Functional Efficiency (CRFE) metric, which won an Uptime Institute GEIT award in 2011 (http://bit.ly/1oxnTY8). We used this metric to demonstrate the effects of our new methods on our environment and to prove that we had seen significant improvement.

This air divider is a homemade unit that separates Hot and Cold Aisles and prevents bypass air. Air is stupid; you have to force it to do what you want.

In the first Kaiser Permanente data center in which we implemented what have become our best practices, we started out with 90 CRAH units in a 65,000-square-foot (ft²) raised floor space. The total UPS load was 3,595 kilowatts (kW), and the Cooling Capacity Factor (CCF) was 2.92. After piloting our new methods, we were able to shut down 41 CRAH units, which helped reduce CCF to 1.7, and realize a sustained energy reduction of just under 10%, or 400 kW. Facility operators can easily project savings to their own environments by multiplying 400 kW by 8,760 hours per year and by their own electrical utility rate. At $0.10/kilowatt-hour, this would be about $350,000 annually.
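The savings projection described above is simple arithmetic, sketched here so operators can substitute their own numbers. The function name is illustrative; the 400 kW reduction, 8,760 hours per year, and $0.10/kilowatt-hour rate are the figures quoted in the text.

```python
HOURS_PER_YEAR = 8760


def annual_savings(kw_reduction: float, rate_per_kwh: float) -> float:
    """Annual cost savings from a sustained reduction in electrical demand."""
    return kw_reduction * HOURS_PER_YEAR * rate_per_kwh


if __name__ == "__main__":
    kw_reduction = 400.0   # sustained reduction reported in the article
    rate_per_kwh = 0.10    # example utility rate from the article, in $/kWh
    savings = annual_savings(kw_reduction, rate_per_kwh)
    print(f"Projected savings: ${savings:,.0f} per year")
```

To project savings for another site, replace the rate (and the kW reduction, if known) with local values.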

In addition, our efficiency increased from 67% to 87%, as measured using Kaiser Permanente’s CRFE methodology, and we were still able to maintain stable air supplies to the computer equipment within ASHRAE-recommended ranges. The payback on the project to make the airflow management improvements and reduce the number of CRAH units running in the first computer room was just under 6 months. Kaiser Permanente also received a nice energy incentive rebate from the local utility, which was slightly less than 25% of the material implementation cost for the project.

As an added benefit, we also reduced maintenance costs. The raised floor environment is much easier to maintain because of the reduced heat in the environment. When making changes or additions, we just have to worry about removing the heat for the change and making sure an additional air supply is available; the cooling part takes care of itself. There is also a lot less equipment running on the raised floor that needs to be maintained, and we have increased redundancy.

Kaiser Permanente has now rolled this thinking and training out across its entire portfolio of six national data centers and is realizing tremendous benefits. We’re still obsessed with heat removal. We’re still creating new best practices in airflow management, and we’re still taking care of those cold spots. A simple change in how we thought about computer room air has paid dividends. We’re focused on what we want to achieve, not what we’re trying to avoid. We found a path around the pothole.

Steve Press has been a data center professional for more than 30 years and is the leader of Kaiser Permanente’s (KP) National Data Center Solutions group, responsible for hyper-critical facility operations, data center engineering, strategic direction, and IT environmental sustainability, delivering real-time health care to more than 8.7 million health-care members. Mr. Press came to KP after working in Bank of America’s international critical facilities team for more than 20 years. He is a Certified Energy Manager (CEM) and an Accredited Tier Specialist (ATS). Mr. Press has a tremendous passion for greening KP’s data centers and IT environments. This has led to several significant recognitions for KP, including Uptime Institute’s GEIT award for Facilities Innovation in 2011.

Pitt Turner IV, PE, is the executive director emeritus of Uptime Institute, LLC. Since 1993 he has been a senior consultant and principal for Uptime Institute Professional Services (UIPS), previously known as ComputerSite Engineering, Inc.

Before joining the Institute, Mr. Turner was with Pacific Bell, now AT&T, for more than 20 years in their corporate real estate group, where he held a wide variety of leadership positions in real estate property management, major construction projects, capital project justification, and capital budget management. He has a BS in Mechanical Engineering from UC Davis and an MBA with honors in Management from Golden Gate University in San Francisco. He is a registered professional engineer in several states. He travels extensively and is a school-trained chef.

UI @ 2021
