Ignore Data Center Water Consumption at Your Own Peril

Will drought dry up the digital economy? With water scarcity a pressing concern, data center owners are re-examining water consumption for cooling.

By Ryan Orr and Keith Klesner

In the midst of a historic drought in the western U.S., 70% of California experienced “extreme” drought in 2015, according to the U.S. Drought Monitor.

The state’s governor issued an Executive Order requiring a 25% reduction in urban water usage compared to 2013. The Executive Order also authorizes the state’s Water Resources Control Board to implement restrictions on individual users to meet overall water savings objectives. Data centers, in large part, do not appear to have been impacted by new restrictions. However, there is no telling what steps may be deemed necessary as the state continues to push for savings.

The water shortage is putting a premium on the existing resources. In 2015, water costs in the state increased dramatically, with some customers seeing rate increases as high as 28%. California is home to many data centers, and strict limitations on industrial use would dramatically increase the cost of operating a data center in the state.

The problem in California is severe and extends beyond the state’s borders.

Population growth and climate change will create additional global water demand, so the problem of water scarcity is not going away and will not be limited to California or even the western U.S.; it is a global issue.

On June 24, 2015, The Wall Street Journal published an article focusing on data center water usage, “Data Centers and Hidden Water Use.” With the industry still dealing with environmental scrutiny over carbon emissions, and water scarcity poised to be the next major resource to be publicly examined, IT organizations need to have a better understanding of how data centers consume water, the design choices that can limit water use, and the IT industry’s ability to address this issue.

HOW DATA CENTERS USE WATER

Data centers generally use water to aid heat rejection (i.e., cooling IT equipment). Many data centers use a water-cooled chilled water system, which distributes cool water to computer room cooling units. A fan blows across the chilled water coil, providing cool, conditioned air to IT equipment. That water then flows back to the chiller and is recooled.

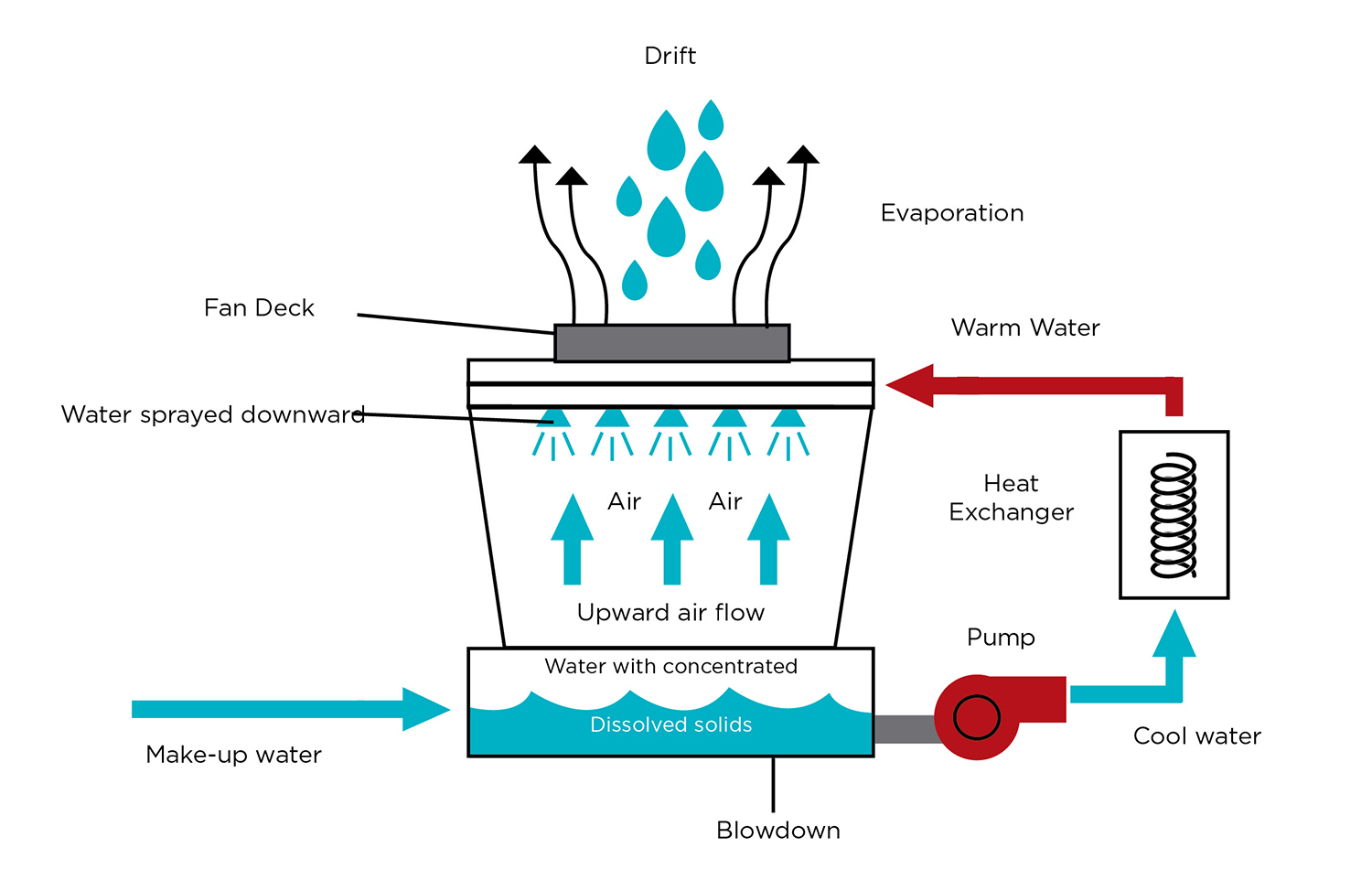

Water-cooled chiller systems rely on a large box-like unit called a cooling tower to reject heat collected by this system (see Figure 1). These cooling towers are the main culprits for water consumption in traditional data center designs. Cooling towers cool warm condenser water from the chillers by pulling in ambient air from the sides and passing it over wet media, which causes some of the water to evaporate. The cooling tower then rejects the heat by blowing hot, wet air out of the top. The cooled condenser water then returns to the chiller to accept more heat. A 1-megawatt (MW) data center will pump 855 gallons of condenser water per minute through a cooling tower, based on a design flow rate of 3 gallons per minute (GPM) per ton.
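The flow figure above can be approximated with a short calculation. The sketch below is illustrative only: it assumes the common conversion of about 3.517 kW per ton of refrigeration and that the heat to be rejected equals the 1 MW load. The function and constant names are hypothetical, not from the article.

```python
# Assumption: one ton of refrigeration is about 3.517 kW (12,000 BTU/h).
KW_PER_TON = 3.517


def condenser_flow_gpm(heat_load_kw: float, gpm_per_ton: float = 3.0) -> float:
    """Estimate condenser water flow from heat load and a design GPM-per-ton rate."""
    tons = heat_load_kw / KW_PER_TON
    return tons * gpm_per_ton


flow = condenser_flow_gpm(1000.0)  # 1 MW of heat to reject
print(f"Estimated condenser water flow: {flow:.0f} GPM")
```

Under these assumptions the estimate lands within a few GPM of the 855 GPM cited, which is consistent with rounding the load to about 285 tons.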

Cooling towers “consume” or lose water through evaporation, blow down, and drift (see Figure 2). Evaporation is caused by the heat actually removed from the condenser water loop. Typical design practice allows evaporation to be estimated at 1% of the cooling tower water flow rate, which equates to 8.55 GPM in a fairly typical 1-MW system. Blow down describes the replacement cycle, during which the cooling tower dumps condenser water to eliminate minerals, dust, and other contaminants. Typical design practices allow for blow down to be estimated at 0.5% of the condenser water flow rate, though this could vary widely depending on the water treatment and water quality. In this example, blow down would be about 4.27 GPM. Drift describes the water that is blown away from the cooling tower by wind or from the fan. Typical design practices allow drift to be estimated at 0.005%, though poor wind protection could increase this value. In this example, drift would be practically negligible.

In total, a 1-MW data center using traditional cooling methods would use about 6.75 million gallons of water per year.
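The annual total follows from the three loss estimates above. The sketch below is a rough check, assuming the tower runs at the 855 GPM design flow around the clock for a 365-day year; the function and variable names are illustrative.

```python
MINUTES_PER_YEAR = 60 * 24 * 365


def cooling_tower_losses_gpm(flow_gpm: float,
                             evaporation: float = 0.01,    # 1% of flow
                             blow_down: float = 0.005,     # 0.5% of flow
                             drift: float = 0.00005):      # 0.005% of flow
    """Return each water loss in GPM as a fraction of condenser water flow."""
    return {
        "evaporation": flow_gpm * evaporation,
        "blow_down": flow_gpm * blow_down,
        "drift": flow_gpm * drift,
    }


losses = cooling_tower_losses_gpm(855.0)
total_gpm = sum(losses.values())
annual_gallons = total_gpm * MINUTES_PER_YEAR

for name, gpm in losses.items():
    print(f"{name}: {gpm:.2f} GPM")
print(f"Annual consumption: {annual_gallons / 1e6:.2f} million gallons")
```

With these inputs, the result comes out within about 0.2% of the 6.75 million gallons cited. Actual consumption depends on load, weather, and water treatment, so the percentages should be treated as design rules of thumb.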

CHILLERLESS ALTERNATIVES

Many data centers are adopting new chillerless cooling methods that are more energy efficient and use less water than the chiller and cooling tower combinations. These technologies still reject heat to the atmosphere using cooling towers. However, chillerless cooling methodologies incorporate an economizer that utilizes outdoor air, which means that water is not evaporated all day long or even every day.

Some data centers use direct air cooling, which introduces outside air to the data hall, where it directly cools the IT gear without any conditioning. Christian Belady, Microsoft’s general manager for Data Center Services, once demonstrated the potential of this method by running servers for long periods in a tent. Climate and, more importantly, an organization’s willingness to accept the risk of IT equipment failure due to fluctuating temperatures and airborne particulate contamination limit the use of this unusual approach. The majority of organizations that use this method do so in combination with other cooling methods.

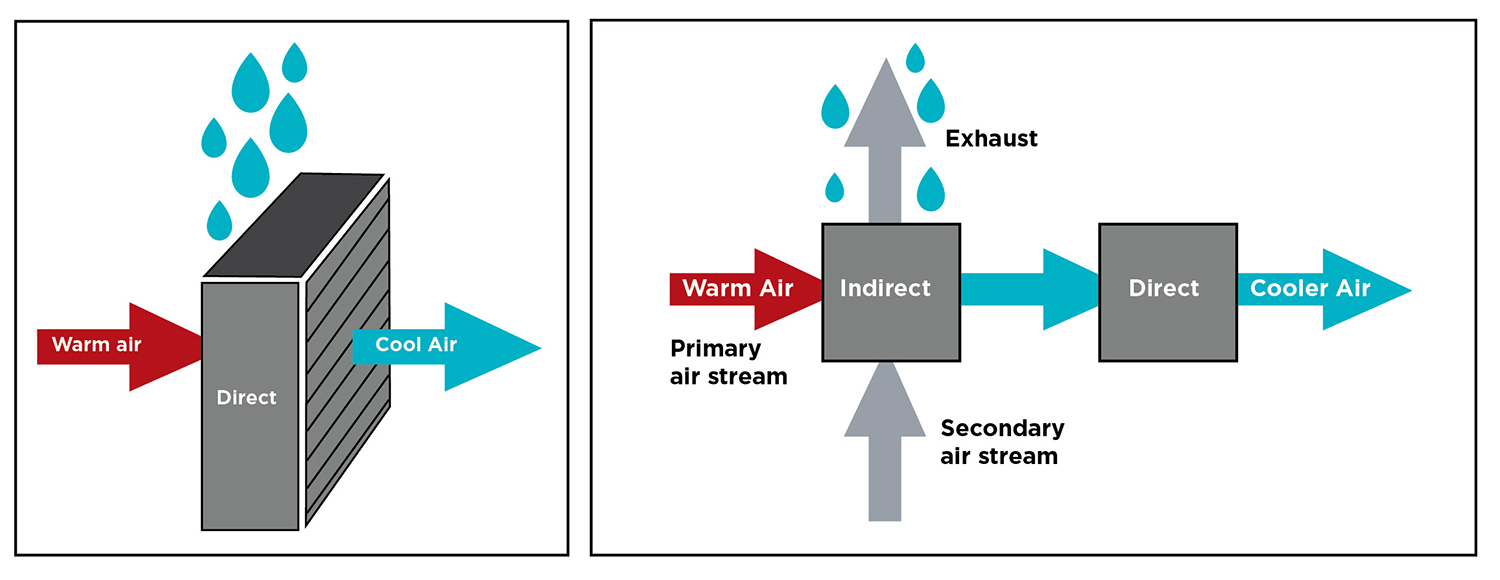

Direct evaporative cooling employs outside air that is cooled by a water-saturated medium or via misting. A blower circulates this air to cool the servers (see Figure 3). This approach, while more common than direct outside air cooling, still exposes IT equipment to risk from outside contaminants from external events like forest fires, dust storms, agricultural activity, or construction, which can impair server reliability. These contaminants can be filtered, but many organizations will not tolerate a contamination risk.

Some data centers use what is called indirect evaporative cooling. This process uses two air streams: a closed-loop air supply for IT equipment and an outside air stream that cools the primary air supply. The outside (scavenger) air stream is cooled using direct evaporative cooling. The cooled secondary air stream goes through a heat exchanger, where it cools the primary air stream. A fan circulates the cooled primary air stream to the servers.

WATERLESS ALTERNATIVES

Some existing data center cooling technologies do not evaporate water at all. Air-cooled chilled water systems do not include evaporative cooling towers. These systems are closed loop and do not use makeup water; however, they are much less energy efficient than nearly all the other cooling options, which may offset any water savings of this technology. Air-cooled systems can be fitted with water sprays to provide evaporative cooling that increases capacity and/or cooling efficiency, but this approach is somewhat rare in data centers.

The direct expansion (DX) computer room air conditioner (CRAC) system includes a dry cooler that rejects heat via an air-to-refrigerant heat exchanger. These types of systems do not evaporate water to reject heat. Select new technologies utilize this equipment with a pumped refrigerant economizer that makes the unit capable of cooling without the use of the compressor. The resulting compressorless system does not evaporate water to cool air either, which improves both water and energy efficiency. Uptime Institute has seen these technologies operate at power usage effectiveness (PUE) values of approximately 1.40, even while in full DX cooling mode, and they meet California’s strict Title 24 Building Energy Efficiency Standards.

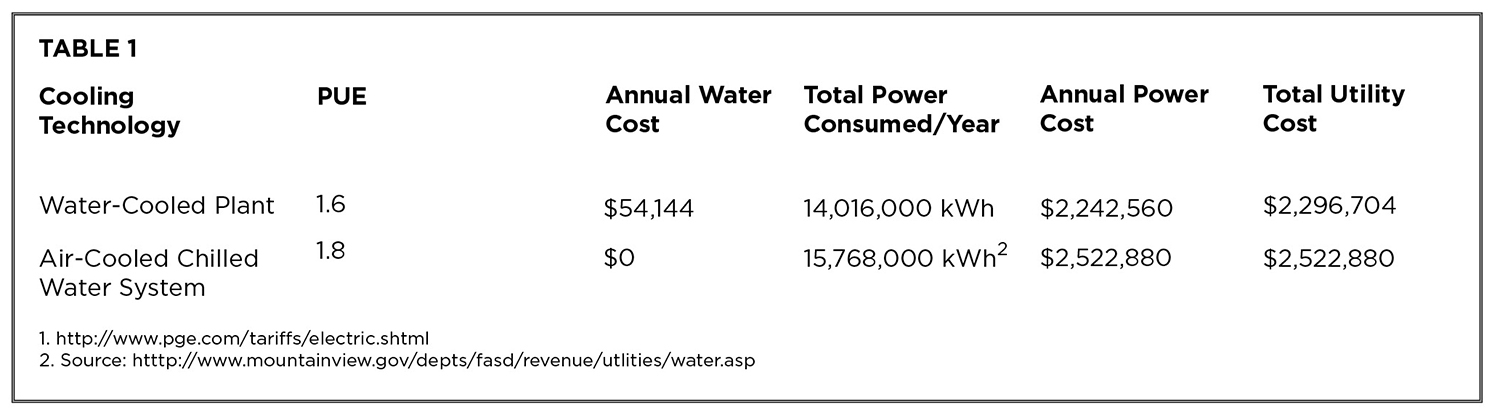

Table 1. Energy, water, and resource costs and consumption compared for generic cooling technologies.

Table 1 compares a typical water-cooled chiller system to an air-cooled chilled water system in a 1-MW data center. It assumes that the water-cooled chiller plant operates at a PUE of 1.6 and the air-cooled chiller plant at a PUE of 1.8, with an electric rate of $0.16/kilowatt-hour (kWh) and a water rate of $6/unit, where one unit is 748 gallons.

The table shows that although air-cooled chillers do not consume any water, they can still cost more to operate over the course of a year because water, even though a potentially scarce resource, is still relatively cheap for data center users compared to power. It is crucial to evaluate the potential trade-offs between energy and water consumption during the design process. This analysis does not include considerations for the upstream costs or resource consumption associated with water production and energy production. However, these should also be weighed carefully against a data center’s sustainability goals.
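The comparison can be roughed out from the stated assumptions. The sketch below is not a reproduction of Table 1: it assumes a constant 1 MW IT load for 8,760 hours per year, uses the roughly 6.75 million gallons per year estimated earlier for the water-cooled plant, and assumes zero water use for the air-cooled plant. All names are illustrative.

```python
HOURS_PER_YEAR = 8760
GALLONS_PER_UNIT = 748


def annual_cost(it_load_kw: float, pue: float, water_gallons: float,
                kwh_rate: float = 0.16, unit_rate: float = 6.0):
    """Return (energy cost, water cost, total cost) in dollars per year."""
    energy_kwh = it_load_kw * pue * HOURS_PER_YEAR  # total facility energy
    energy_cost = energy_kwh * kwh_rate
    water_cost = water_gallons / GALLONS_PER_UNIT * unit_rate
    return energy_cost, water_cost, energy_cost + water_cost


water_cooled = annual_cost(1000, 1.6, 6_750_000)  # PUE 1.6, evaporative towers
air_cooled = annual_cost(1000, 1.8, 0)            # PUE 1.8, no makeup water

for label, (energy, water, total) in [("Water-cooled", water_cooled),
                                      ("Air-cooled", air_cooled)]:
    print(f"{label}: energy ${energy:,.0f}, water ${water:,.0f}, total ${total:,.0f}")
```

Under these assumptions the water bill is on the order of tens of thousands of dollars per year, while the extra energy for the air-cooled plant costs a few hundred thousand, which illustrates why the water-free option can still be the more expensive one to operate.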

LEADING BY EXAMPLE

Some prominent data centers using alternative cooling methods include:

• Vantage Data Centers’ Quincy, WA, site uses Munters Indirect Evaporative Cooling systems.

• Rackspace’s London data center and Digital Realty’s Profile Park facility in Dublin use roof-mounted indirect outside air technology coupled with evaporative cooling from ExCool.

• A first phase of Facebook’s Prineville, OR, data center uses direct evaporative cooling and humidification. Small nozzles attached to water pipes spray a fine mist across the air pathway, cooling the air and adding humidity. In a second phase, Facebook uses a dampened media.

• Yahoo’s upstate New York data center uses direct outside air cooling when weather conditions allow.

• Metronode, a telecommunications company in Australia, uses direct air cooling (as well as direct evaporative and DX for backup) in its facilities.

• Dupont Fabros is utilizing recycled gray water for cooling towers in its Silicon Valley and Ashburn, VA, facilities. The municipal gray water supply saves on water cost, reduces water treatment for the municipality, and reuses a less precious form of water.

Facebook reports that its Prineville cooling system uses 10% of the water of a traditional chiller and cooling tower system. ExCool claims that it requires roughly 260,000 gallons annually in a 1-MW data center, 3.3% of traditional data center water consumption, and the data centers using pumped refrigerant systems consume even less water—zero. These companies save water by eliminating evaporative technologies or by combining evaporative technologies with outside air economizers, meaning that they do not have to evaporate water 24×7.

DRAWBACKS TO LOW WATER COOLING SYSTEMS

These cooling systems can cost much more than traditional cooling systems. At current rates for water and electricity, return on investment (ROI) on these more expensive systems can take years to achieve. Compass Datacenters recently published a study showing the potential negative ROI for an evaporative cooling system.

These systems also tend to take up a lot of space. For many data centers, water-cooled chiller plants make more sense because an owner can pack a large-capacity system into a relatively small footprint without modifying building envelopes.

There are also implications for data center owners who want to achieve Tier Certification. Achieving Concurrently Maintainable Tier III Constructed Facility Certification requires the isolation of each and every component of the cooling system without impact to design day cooling temperature. This means an owner needs to be able to tolerate the shutdown of cooling units, control systems, makeup water tanks and distribution, and heat exchangers. Fault Tolerance (Tier IV) requires the system to sustain operations without impact to the critical environment after any single but consequential event. While Uptime Institute has Certified many data centers that use newer cooling designs, they do add a level of complexity to the process.

Organizations also need to factor temperature considerations into their decision. If a data center is not prepared to run its server inlet air temperature at 22 degrees Celsius (72 degrees Fahrenheit) or higher, there is not much payback on the extra investment because the potential for economization is reduced. Also, companies need to improve their computer room management, including optimizing airflow for efficient cooling and potentially adding containment, which can drive up costs. Additionally, some of these cooling systems just won’t work in hot and humid climates.

As with any newer technology, alternative cooling systems present operations challenges. Organizations will likely need to implement new training to operate and maintain unfamiliar equipment configurations. Companies will need to conduct particularly thorough due diligence on new, proprietary vendors entering the mission critical data center space for the first time.

And last, there is significant apathy about water conservation across the data center industry as a whole. Uptime Institute survey data shows that less than one-third of data center operators track water usage or use the water usage effectiveness (WUE) metric. Furthermore, Uptime Institute’s 2015 Data Center Industry Survey found (see The Uptime Institute Journal, vol 6, p. 60) that data center operators ranked water conservation as a low priority.

But the volumes of water or power used by data centers make them easy targets for criticism. While there are good reasons to choose traditional water-cooled chilled water systems, especially when dealing with existing buildings, for new data center builds, owners should evaluate alternative cooling designs against overall business requirements, which might include sustainability factors.

Uptime Institute has invested decades of research toward reducing data center resource consumption. The water topic needs to be assessed within a larger context such as the holistic approach to efficient IT described in Uptime Institute’s Efficient IT programs. With this framework, data center operators can learn how to better justify and explain business requirements and demonstrate that they can be responsible stewards of our environment and corporate resources.

Matt Stansberry contributed to this article.

WATER SOURCES

Data centers can use water from almost any source, with the vast majority of those visited by Uptime Institute using municipal water, which typically comes from reservoirs. Other data centers use groundwater, which is precipitation that seeps down through the soil and is stored below ground. Data center operators must drill wells to access this water. However, drought and overuse are depleting groundwater tables worldwide. The United States Geological Survey has published a resource to track groundwater depletion in the U.S.

Other sources of water include rainfall, gray water, and surface water. Very few data centers use these sources, for a variety of reasons. Because rainfall can be unpredictable, for instance, it is mostly collected and used as a secondary or supplemental water supply. Similarly, only a handful of data centers around the world are sited near lakes, rivers, or the ocean, but those data center operators could pump water from these sources through a heat exchanger. Data centers also sometimes use a body of water as an emergency water source for cooling towers or evaporative cooling systems. Finally, gray water, which is partially treated wastewater, can be utilized as a non-potable water source for irrigation or cooling tower use. These water sources are interdependent and may be in short supply during a sustained regional drought.

Ryan Orr joined Uptime Institute in 2012 and currently serves as a senior consultant. He performs Design and Constructed Facility Certifications, Operational Sustainability Certifications, and customized Design and Operations Consulting and Workshops. Mr. Orr’s work in critical facilities includes responsibilities ranging from project engineer on major upgrades for legacy enterprise data centers to space planning for the design and construction of multiple new data center builds and data center M&O support.

Keith Klesner is Uptime Institute’s Senior Vice President, North America. Mr. Klesner’s career in critical facilities spans 16 years and includes responsibilities ranging from planning, engineering, design, and construction to start-up and ongoing operation of data centers and mission critical facilities. He has a B.S. in Civil Engineering from the University of Colorado-Boulder and an MBA from the University of LaVerne. He maintains status as a professional engineer (PE) in Colorado and is a LEED Accredited Professional.