A Holistic Approach to Reducing Cost and Resource Consumption

Data center operators need to move beyond PUE and address the underlying factors driving poor IT efficiency.

By Matt Stansberry and Julian Kudritzki, with Scott Killian

Since the early 2000s, when the public and IT practitioners began to understand the financial and environmental repercussions of IT resource consumption, the data center industry has focused obsessively and successfully on improving the efficiency of data center facility infrastructure. Unfortunately, we have been focused on just the tip of the iceberg – the most visible, but smallest piece of the IT efficiency opportunity.

At the second Uptime Institute Symposium in 2007, Amory Lovins of the Rocky Mountain Institute stood on stage with Uptime Institute Founder Ken Brill and called on IT innovators and government agencies to improve server compute utilization, power supplies, and the efficiency of the software code itself.

But those calls to action fell on deaf ears, leaving power usage effectiveness (PUE) as the last vestige of the heady days when data center energy was top of mind for industry executives, regulators, and legislators. PUE is an effective engineering ratio that data center facilities teams can use to capture baseline data and track the results of efficiency improvements to mechanical and electrical infrastructure. It is also useful for design teams comparing equipment or topology-level solutions. But as industry adoption of PUE has expanded, the metric is increasingly being misused as a methodology to cut costs and prove stewardship of corporate and/or environmental resources.
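For reference, PUE is the ratio of total facility energy to the energy delivered to IT equipment. The short Python sketch below shows the calculation. The meter readings are hypothetical, and `overhead_share` is a helper introduced here for illustration only. Because the denominator counts every kilowatt-hour the IT equipment draws, idle or abandoned servers included, the ratio by itself cannot show how productively the IT load is used.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy that never reaches IT equipment."""
    return (pue_value - 1.0) / pue_value


# Hypothetical monthly meter readings (assumed values, not survey data).
total_kwh = 1_700_000.0   # utility meter: everything the site consumed
it_kwh = 1_000_000.0      # energy measured at the IT equipment

site_pue = pue(total_kwh, it_kwh)
print(f"PUE: {site_pue:.2f}")
print(f"Share of energy spent on overhead: {overhead_share(site_pue):.1%}")
```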

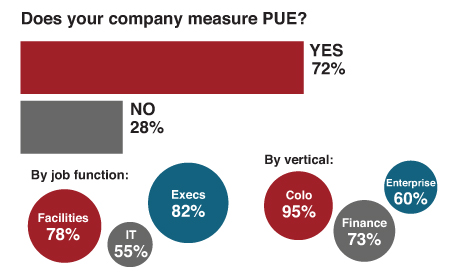

Figure 1. 82% of Senior Execs are tracking PUE and reporting those findings to their management. Source: Uptime Institute Data Center Industry Survey 2014

Feedback from the Uptime Institute Network around the world confirms Uptime Institute’s field experience that enterprise IT executives are overly focused on PUE. According to the Uptime Institute’s Annual Data Center Industry Survey, conducted January-April 2014, the vast majority of IT executives (82%) track PUE and report that metric to their corporate management. By focusing on PUE, IT executives are spending effort and capital for diminishing returns and ignoring the underlying drivers of poor IT efficiency.

For nearly a decade, Uptime Institute has recommended that enterprise IT executives take a holistic approach to significantly reducing the cost and resource consumption of compute infrastructure.

Ken Brill identified the following as the primary culprits of poor IT efficiency as early as 2007:

- Poor demand and capacity planning within and across functions (business, IT, facilities)

- Significant failings in asset management (6% average server utilization, 56% facility utilization)

- Boards, CEOs, and CFOs not holding CIOs accountable for critical data center facilities’ CapEx and data center operational efficiency

Perhaps the industry was not ready to hear this governance message and the economics did not motivate broad response. Additionally, the furious pace at which data centers were being built distracted from the ongoing cost of IT service delivery. Since then, operational costs have continued to escalate as a result of insufficient attention being paid to the true cost of operations.

Rising energy, equipment, and construction costs and increased government scrutiny are compelling a mature management model that identifies and rewards improvements to the most glaring IT inefficiencies. At the same time, the primary challenges facing IT organizations are unchanged from almost 10 years ago. Select leading enterprises have taken it upon themselves to address these challenges. But the industry lacks a coherent mode and method that can be shared and adopted for full benefit of the industry.

A solution developed by and for the IT industry will be more functional and impactful than a coarse adaptation of other industries’ efficiency programs (manufacturing and mining have been suggested as potential models) or government intervention.

In this document, Uptime Institute presents a meaningful justification for unifying the disparate disciplines and efforts together, under a holistic plan, to radically reduce IT cost and resource consumption.

Multidisciplinary Approach Includes Siting, Design, IT, Procurement, Operations, and Executive Leadership

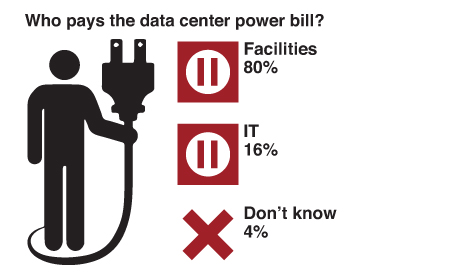

Historically, data center facilities management has driven IT energy efficiency. According to the Uptime Institute’s Annual Data Center Industry Survey (2011-2014), less than 20% of companies report that their IT departments pay the data center power bill, and the vast majority of companies allocate this cost to the facilities or real estate budgets. This lopsided financial arrangement fosters unaccountable IT growth, inaccurate planning, and waste (see Figure 2).

Figure 2. Less than 20% of companies report that their IT departments pay the data center power bill, and the vast majority of companies allocate this cost to the facilities or real estate budgets. Source: Uptime Institute Data Center Industry Survey 2012

The key to success for enterprises pursuing IT efficiency is to create a multidisciplinary energy management plan (owned by senior executive leadership) that includes the following:

Executive commitment to sustainable results

- A formal reporting relationship between IT and data center facilities management with a chargeback model that takes into account procurement and operations/maintenance costs

- Key performance indicators (KPIs) and mandated reporting for power, water, and carbon utilization

- A culture of continuous improvement with incentives and recognition for staff efforts

- Cost modeling of efficiency improvements for presentation to senior management

Optimization of resource efficiency through ongoing management and operations

- Computer room management: rigorous airflow management and no bypass airflow

- Testing, documenting, and improving IT hardware utilization

- IT asset management: consolidating, decommissioning, and recycling obsolete hardware

- Managing software and hardware life cycles from procurement to disposal

Effective investment in efficiency during site planning and design phase of the data center

- Site-level considerations: utility sourcing, ambient conditions, building materials, and effective land use

- Design and topology that match business demands with maximum efficiency

- Effective monitoring and control systems

- Phased buildouts that scale to deployment cycles

Executive Commitment to Sustainable Results

Any IT efficiency initiative is going to be short-lived and less effective without executive authority to drive the changes across the organization. For both one-time and sustained savings, executive leadership must address the management challenges inherent in any process improvement. Many companies are unable to effectively hold IT accountable for inefficiencies because financial responsibility for energy costs lies instead with facilities management.

Uptime Institute has challenged the industry for years to restructure company financial reporting so that IT has direct responsibility for its own energy and data center costs. Unfortunately, there has been very little movement toward that kind of arrangement, and industry-wide chargeback models have been flimsy, disregarded, or nonexistent.

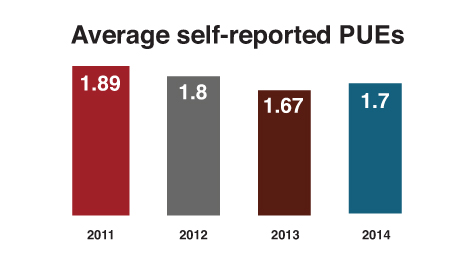

Figure 3. Average PUE decreased dramatically from 2007-2011, but efficiencies have been harder to find since then. Source: Uptime Institute Data Center Industry Survey 2014

Perhaps we’ve been approaching this issue from the wrong angle. Instead of moving the data center’s financial responsibilities over to IT, some organizations are moving the entire Facilities team and costs wholesale into a single combined department.

In one example, a global financial firm with 22 data centers across 7 time zones recently merged its Facilities team into its overall IT infrastructure and achieved the following results:

- Stability: an integrated team that provides single entity accountability and continuous improvement

- Energy Efficiency: holistic approach to energy from chips to chillers

- Capacity: design and planning much more closely aligned with IT requirements

This globally integrated organization with single-point ownership and accountability established firm-wide standards for data center design and operation and deployed an advanced tool set that integrates facilities with IT.

This kind of cohesion is necessary for a firm to conduct effective cost modeling, implement tools like DCIM, and overcome cultural barriers associated with a new IT efficiency program.

Executive leadership should consider the following when launching a formal energy management program:

- Formal documentation of responsibility, reporting, strategy, and program implementation

- Cost modeling and reporting on operating expenses, power cost, carbon cost per VM (virtual machine), and chargeback implementation

- KPIs and targets: power, water, carbon emissions/offsets, hardware utilization, and cost reduction

- DCIM implementation: dashboard that displays all KPIs and drivers that leadership deems important for managing to business objectives

- Incentives and recognition for staff
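As one illustration of the cost modeling and chargeback items above, the following Python sketch allocates a site's total energy to departments in proportion to their metered IT energy, then reports power cost and carbon per VM. It is a simplified model under stated assumptions, not a prescribed method: the department names, energy figures, tariff, and emission factor are all hypothetical, and a real chargeback would also carry the procurement and maintenance costs mentioned earlier.

```python
from dataclasses import dataclass


@dataclass
class Department:
    name: str
    it_kwh: float   # metered IT energy for the billing period
    vm_count: int   # virtual machines operated by the department


def chargeback(departments, total_facility_kwh, price_per_kwh, kg_co2_per_kwh):
    """Allocate total facility energy in proportion to metered IT energy."""
    total_it_kwh = sum(d.it_kwh for d in departments)
    report = {}
    for d in departments:
        # Each department carries its share of cooling and power overhead too.
        allocated_kwh = total_facility_kwh * d.it_kwh / total_it_kwh
        power_cost = allocated_kwh * price_per_kwh
        report[d.name] = {
            "power_cost": power_cost,
            "cost_per_vm": power_cost / d.vm_count,
            "kg_co2_per_vm": allocated_kwh * kg_co2_per_kwh / d.vm_count,
        }
    return report


# All values below are assumed for illustration.
departments = [
    Department("trading", it_kwh=600_000.0, vm_count=400),
    Department("retail", it_kwh=400_000.0, vm_count=100),
]
report = chargeback(
    departments,
    total_facility_kwh=1_700_000.0,
    price_per_kwh=0.10,     # assumed tariff, USD per kWh
    kg_co2_per_kwh=0.5,     # assumed grid emission factor
)
for name, row in report.items():
    print(name, {key: round(value, 2) for key, value in row.items()})
```

In this example the department with fewer VMs per kilowatt-hour shows a much higher cost per VM, which is the kind of signal a chargeback model is meant to surface.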

Operational Efficiency

Regardless of an organization’s data center design topology, there are substantial areas in facility and IT management where low-cost improvements will reap financial and organizational rewards. On the facilities management side, Uptime Institute has written extensively about the simple fixes that prevent bypass airflow, such as ensuring Cold Aisle/Hot Aisle layout in data centers, installing blanking panels in racks, and sealing openings in the raised floor.

Figure 4. Kaiser Permanente won a Brill Award for Efficient IT in 2014 for improving operational efficiency across its legacy facilities.

In a 2004 study, Uptime Institute reported that the cooling capacity of the units found operating in a large sample of data centers was 2.6 times what was required to meet the IT requirements—well beyond any reasonable level of redundant capacity. In addition, an average of only 40% of the cooling air supplied to the data centers studied was used for cooling IT equipment. The remaining 60% was effectively wasted capacity, required only because of mismanaged airflow.

More recent industry data shows that the average ratio of operating nameplate cooling capacity has increased from 2.6 to 3.9 times the IT requirement. Disturbingly, this trend is going in the wrong direction.
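The two measures discussed above are simple ratios that a facilities team can compute from its own readings. The Python sketch below is illustrative only: the room's IT load, cooling capacity, and airflow values are hypothetical, chosen so the results match the 2.6 ratio and 60% bypass figure from the 2004 study.

```python
def cooling_capacity_ratio(operating_cooling_kw: float, it_load_kw: float) -> float:
    """Nameplate capacity of running cooling units relative to the IT heat load."""
    return operating_cooling_kw / it_load_kw


def bypass_fraction(supplied_airflow: float, airflow_through_it: float) -> float:
    """Share of supplied cooling air that never passes through IT equipment."""
    return 1.0 - airflow_through_it / supplied_airflow


# Hypothetical computer room (assumed values).
it_load_kw = 500.0
operating_cooling_kw = 1_300.0   # sum of nameplate capacity of units running
supplied_cfm = 100_000.0         # total airflow delivered by cooling units
it_cfm = 40_000.0                # airflow actually drawn through IT equipment

print(f"Cooling capacity ratio: {cooling_capacity_ratio(operating_cooling_kw, it_load_kw):.1f}x")
print(f"Bypass airflow: {bypass_fraction(supplied_cfm, it_cfm):.0%}")
```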

Uptime Institute has published a comprehensive, 29-step guide to data center cooling best practices to help data center managers take greater advantage of the energy savings opportunities available while providing improved cooling of IT systems: Implementing Data Center Cooling Best Practices

Health-care giant Kaiser Permanente recently deployed many of those steps across four legacy data centers in its portfolio, saving approximately US$10.5 million in electrical utility costs and averting 52,879 metric tons of carbon dioxide (CO2). Kaiser Permanente won a Brill Award for Efficient IT in 2014 for its leadership in this area (see Figure 4).

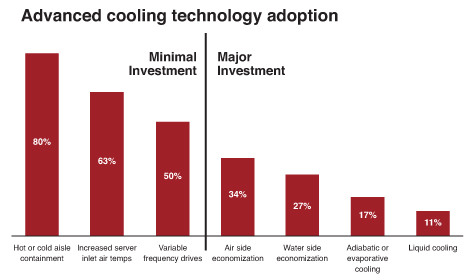

According to Uptime Institute’s 2014 survey data, a large percentage of companies are tackling the issues around inefficient cooling (see Figure 5). Unfortunately, there is not a similar level of adoption for IT operations efficiency.

Figure 5. According to Uptime Institute’s 2014 survey data, a large percentage of companies are tackling the issues around inefficient cooling.

The Sleeping Giant: Comatose IT Hardware

Wasteful, or comatose, servers hide in plain sight in even the most sophisticated IT organizations. These servers, abandoned by application owners and users but still racked and running, represent a triple threat in terms of energy waste—squandering power at the plug, wasting data center facility capacity, and incurring software licensing and hardware maintenance costs.

Uptime Institute has maintained that an estimated 15-20% of servers in data centers are obsolete, outdated, or unused, and that remains true today.

The problem is likely more widespread than previously reported. According to Uptime Institute research, only 15% of respondents believe their server populations include 10% or more comatose machines. Yet, nearly half (45%) of survey respondents have no scheduled auditing to identify and remove unused machines.

Uptime Institute launched the Server Roundup contest in October 2011 to raise awareness about the removal and recycling of comatose and obsolete IT equipment and reduce data center energy use. Uptime Institute invited companies around the globe to help address and solve this problem by participating in the Server Roundup.

The financial firm Barclays removed nearly 10,000 servers in 2013; together they had directly consumed an estimated 2.5 megawatts (MW) of power. Had those servers been left on the wire, the power bill would be approximately US$4.5 million higher than it is today. Installed together, these servers would fill 588 server racks. Barclays also saved approximately US$1.3 million on legacy hardware maintenance costs, reduced the firm’s carbon footprint, and freed up more than 20,000 network ports and 3,000 SAN ports through this initiative (see Figure 6).

Barclays was a Server Roundup winner in 2012 as well, removing 5,515 obsolete servers for power savings of 3 MW, US$3.4 million in annualized power savings, and a further US$800,000 in hardware maintenance savings.
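A rough way to translate a decommissioned IT load into an annual power bill is sketched below in Python. Only the 2.5 MW load and the approximate server count come from the 2013 figures above. The tariff and the PUE multiplier are assumptions chosen for illustration, and the source does not state how the reported savings were calculated, so the output should not be read as a reconstruction of Barclays's numbers.

```python
HOURS_PER_YEAR = 8760


def annual_power_cost(it_load_kw: float, price_per_kwh: float, pue: float = 1.0) -> float:
    """Yearly energy cost of a constant IT load, scaled up by facility overhead."""
    return it_load_kw * pue * HOURS_PER_YEAR * price_per_kwh


# From the article: about 2.5 MW removed across nearly 10,000 servers.
removed_load_kw = 2_500.0
servers_removed = 10_000          # rounded; the article says "nearly 10,000"

# Assumptions, not from the article.
assumed_price_per_kwh = 0.12      # USD per kWh
assumed_pue = 1.7                 # facility overhead multiplier

watts_per_server = removed_load_kw * 1_000 / servers_removed
cost = annual_power_cost(removed_load_kw, assumed_price_per_kwh, assumed_pue)

print(f"Average draw per server: {watts_per_server:.0f} W")
print(f"Estimated annual power cost avoided: US${cost:,.0f}")
```

Substituting a site's own tariff and measured PUE gives a first-order figure to set against the labor cost of an audit and decommissioning effort.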

Figure 6. The Server Roundup sheds light on a serious topic in a humorous way. Barclays saved over US$10 million in two years of dedicated server decommissioning.

In two years, Barclays has removed nearly 15,000 servers and saved over US$10 million. Server Roundup overwhelmingly proves that disciplined hardware decommissioning can provide a significant financial impact. Yet, despite these huge savings and intangible benefits to the overall IT organization, many firms are not applying the same level of diligence and discipline to a server decommissioning plan, as noted previously.

This is the crux of the data center efficiency challenge ahead: convincing more organizations of the massive return on investment in addressing IT instead of relentlessly pursuing physical infrastructure efficiency.

Organizations need to hold IT operations teams accountable to root out inefficiencies, of which comatose servers are only the most egregious example.

Other systemic IT inefficiencies include:

- Neglected application portfolios with outdated, duplicate, or abandoned software programs

- Low-risk activities and test and development applications consuming high-resiliency, resource-intensive capacity

- Server hugging—not deploying workloads to solutions with highly efficient, shared infrastructure

- Fragile legacy software applications requiring old, inefficient, outdated hardware—and often duplicate IT hardware installations—to maintain availability

But, in order to address any of these systemic problems, companies need to secure a commitment from executive leadership by taking a more activist role than previously assumed.

Resource Efficiency in the Design Phase

Some progress has been made, as the majority of current data center designs are now being engineered toward systems efficiency. By contrast, enterprises around the globe operate legacy data centers, and these existing sites by far present the biggest opportunity for improvement and financial return on efficiency investment.

That said, IT organizations should apply the following guidelines to resource efficiency in the design phase:

- Take a phased approach, rather than building out vast expanses of white space at once and running rooms for years with very little IT gear. Find a way to shrink the capital project cycle; create a repeatable, scalable model.

- Implement an operations strategy in the pre-design, design, and construction phases to improve operating performance. (See Start with the End in Mind)

- Define operating conditions that approach the limits of IT equipment thermal guidelines and exploit ambient conditions to reduce cooling load.

Data center owners should pursue resource efficiency in all capital projects, within the constraints of their business demands. The vast majority of companies will not be able to achieve the ultra-low PUEs of web-scale data center operators. Nor should they sacrifice business resiliency or cost effectiveness in pursuit of those kinds of designs—given that the opportunities to achieve energy and cost savings in the operations (rather than through design) are massive.

The lesson often overlooked when evaluating web-scale data centers is that IT in these organizations is closely aligned with the power and cooling topology. The web-scale companies have an IT architecture that allows low equipment-level redundancy and a homogeneous IT environment conducive to custom, highly utilized servers. These efficiency opportunities are not available to many enterprises. However, most enterprises can emulate, if not the actual design, then the concept of designing to match the IT need. Approaches include phasing, varied Tier data centers (e.g., test and development and low-criticality functions can live in Tier I and II rooms; while business-critical activity is in Tier III and IV rooms), and increased asset utilization.

Conclusion

Senior executives understand the importance of reporting and influencing IT energy efficiency, and yet they are currently using inappropriate tools and metrics for the job. The misguided focus on infrastructure masks, and distracts them from addressing, the real systemic inefficiencies in most enterprise organizations.

The data center design community should be proud of its accomplishments in improving power and cooling infrastructure efficiency, yet the biggest opportunities and savings can only be achieved with an integrated and multi-disciplined operations and management team. Any forthcoming gains in efficiency will depend on documenting data center cost and performance, communicating that data in business terms to finance and other senior management within the company, and getting the hardware and software disciplines to take up the mantle of pursuing efficient IT on a holistic basis.

There is increasing pressure for the data center industry to address efficiency in a systematic manner, as more government entities and municipalities are contemplating green IT and energy mandates.

In the 1930s, the movie industry neutralized a patchwork of onerous state and local censorship efforts (and averted the threat of federal action) by developing and adopting its own set of rules: the Motion Picture Production Code. These rules, often called the Hays Code, evolved into the MPAA film ratings system used today, a form of voluntary self-governance that has helped the industry to successfully avoid regulatory interference for decades.

Uptime Institute will continue to produce research, guidelines, and assessment models to assist the industry in self-governance and continuous improvement. Uptime Institute will soon release supplemental papers on relevant topics such as effective reporting and chargebacks.

Additional Resources

Implementing Data Center Cooling Best Practices

Server Decommissioning as a Discipline

2014 Uptime Institute Data Center Industry Survey Results

The High Cost of Chasing Lower PUEs

In 2007, Uptime Institute surveyed its Network members (a user group of large data center owners and operators), and found an average PUE of 2.5. The average PUE improved from 2.50 in 2007 to 1.89 in 2011 in Uptime Institute’s data center industry survey.

So how did the industry make those initial improvements?

A lot of these efforts were simple fixes that prevented bypass airflow, such as ensuring Cold Aisle/Hot Aisle arrangement in data centers, installing blanking panels in racks, and sealing cutouts. Many facilities teams appear to have done what they can to improve existing data center efficiency, short of making huge capital improvements.

From 2011 to today, the average self-reported PUE has only improved from 1.89 to 1.70. The biggest infrastructure efficiency gains happened 5 years ago, and further improvements will require significant investment and effort, with increasingly diminishing returns.
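The diminishing returns can be made concrete with a small calculation. The Python sketch below estimates how much total facility energy each PUE improvement saves, under the simplifying assumption (not stated in the survey) that the IT load stays constant. The 2014 label for "today" reflects the survey year cited in this article.

```python
def facility_energy_saving(pue_before: float, pue_after: float) -> float:
    """Fractional drop in total facility energy for a fixed IT load."""
    return (pue_before - pue_after) / pue_before


# Average PUE values reported in the article.
periods = [
    ("2007 to 2011", 2.50, 1.89),
    ("2011 to 2014", 1.89, 1.70),
]

for label, before, after in periods:
    saving = facility_energy_saving(before, after)
    print(f"{label}: PUE {before:.2f} -> {after:.2f}, facility energy down {saving:.1%}")
```

Under that assumption, the first period cuts total facility energy by roughly a quarter, while the second yields about a tenth, even though it starts from a lower base.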

In a 2010 interview, Christian Belady, architect of the PUE metric, said, “The job is never done, but if you focus on improving in one area very long you’ll start to get diminishing returns. You have to be conscious of the cost pie, always be conscious of where the bulk of the costs are.”

But executives are pressuring for more. Further investments in technologies and design approaches may provide negative financial payback and zero improvement of the systemic IT efficiency problems.

What Happens If Nothing Happens?

In some regions, energy costs are predicted to increase by 40% by 2020. Most organizations cannot afford such a dramatic increase to the largest operating cost of the data center.

For finance, information services, and other industries, IT is the largest energy consumer in the company. Corporate sustainability teams have achieved meaningful gains in other parts of the company but seek a meaningful way to approach IT.

China is considering a government categorization of data centers based upon physical footprint. Any resulting legislation will ignore the defining business, performance, and resource consumption characteristics of a data center.

In South Africa, the carbon tax has reshaped the data center operations cost structure for large IT operators and visibly impacted the bottom line.

Government agencies will step in to fill the void and create a formula- or metric-based system for demanding efficiency improvement, which will not take into account an enterprise’s business and operating objectives. For example, the U.S. House of Representatives recently passed a bill (HR 2126) that would mandate new energy efficiency standards in all federal data centers.

Matt Stansberry is director of Content and Publications for the Uptime Institute and also serves as program director for the Uptime Institute Symposium, an annual spring event that brings together 1,500 stakeholders in enterprise IT, data center facilities, and corporate real estate to deal with the critical issues surrounding enterprise computing. He was formerly editorial director for Tech Target’s Data Center and Virtualization media group, and was managing editor of Today’s Facility Manager magazine. He has reported on the convergence of IT and Facilities for more than a decade.

Julian Kudritzki joined the Uptime Institute in 2004 and currently serves as COO. He is responsible for the global proliferation of Uptime Institute standards. He has supported the founding of Uptime Institute offices in numerous regions, including Brazil, Russia, and North Asia. He has collaborated on the development of numerous Uptime Institute publications, education programs, and unique initiatives such as Server Roundup and FORCSS. He is based in Seattle, WA.

Scott Killian joined the Uptime Institute in 2014 and currently serves as VP for Efficient IT Program. He surveys the industry for current practices, and develops new products to facilitate industry adoption of best practices. Mr. Killian directly delivers consulting at the site management, reporting, and governance levels. He is based in Virginia.

Prior to joining Uptime Institute, Mr. Killian led AOL’s holistic resource consumption initiative, which resulted in AOL winning two Uptime Institute Server Roundups for decommissioning more than 18,000 servers and reducing operating expenses more than US$6 million. In addition, AOL received three awards in the Green Enterprise IT (GEIT) program. AOL accomplished all this in the context of a five-year plan developed by Mr. Killian to optimize data center resources, which saved ~US$17 million annually.