Economizers in Tier Certified Data Centers

Achieving the efficiency and cost savings benefits of economizers without compromising Tier level objectives

By Keith Klesner

In their efforts to achieve lower energy use and greater mechanical efficiency, data center owners and operators are increasingly willing to consider and try economizers. At the same time, many new vendor solutions are coming to market. In Tier Certified data center environments, however, economizers, just as any other significant infrastructure system, must operate consistently with performance objectives.

Observation by Uptime Institute consultants indicates that roughly one-third of new data center construction designs include an economizer function. Existing data centers are also looking at retrofitting economizer technologies to improve efficiency and lower costs. Economizers use external ambient air to help cool IT equipment. In some climates, the electricity savings from implementing economizers can be so significant that the method has been called “free cooling.” However, all cooling solutions require fans, pumps, and/or other systems that draw power; thus, the technology is not really free, and the term “economizer” is more accurate.

When The Green Grid surveyed large 2,500-square foot data centers in 2011, 49% of the respondents (primarily U.S. and European facilities) reported using economizers and another 24% were considering them. In the last 4 years, these numbers have continued to grow. In virtually all climatic regions, adoption of these technologies appears to be on the rise. Uptime Institute has seen an increase in the use of economizers in both enterprise and commercial data centers, as facilities attempt to lower their power usage effectiveness (PUE) and increase efficiency. This increased adoption is due in large part to fears about rising energy costs (predicted to grow significantly in the next 10 years). In addition, outside organizations, such as ASHRAE, are advocating for greater efficiencies, and internal corporate and client sustainability initiatives at many organizations drive the push to be more efficient and reduce costs.

The marketplace includes a broad array of economizer solutions:

• Direct air cooling: Fans blow cooler outside air into a data center, typically through filters

• Indirect evaporative cooling: A wetted medium or water spray promotes evaporation to supply cool air into a data center

• Pumped refrigerant dry coolers: A closed-loop fluid, similar to an automotive radiator, rejects heat to external air and provides cooling to the data center

• Water-side economizing: Traditional cooling tower systems incorporating heat exchangers bypass chillers to cool the data center

IMPLICATIONS FOR TIER

Organizations that plan to utilize an economizer system and desire to attain Tier Certification must consider how best to incorporate these technologies into a data center in a way that meets Tier requirements. For example, Tier III Certified Constructed Facilities have Concurrently Maintainable critical systems. Tier IV Certified Facilities must be Fault Tolerant.

Some economizer technologies and/or their implementation methods can affect critical systems that are integral to meeting Tier Objectives. For instance, many technologies were not originally designed for data center use, and manufacturers may not have thought through all the implications.

For example, true Fault Tolerance is difficult to achieve and requires sophisticated controls. Detailed planning and testing is essential for a successful implementation. Uptime Institute does not endorse or recommend any specific technology solution or vendor; each organization must make its own determination of what solution will meet the business, operating, and environmental needs of its facility.

ECONOMIZER TECHNOLOGIES

Economizer technologies include commercial direct air rooftop units, direct air plus evaporative systems, indirect evaporative cooling systems, water-side economizers, and direct air plus dry cooler systems.

DIRECT AIR

Direct air units used as rooftop economizers are often the same units used for commercial heating, ventilation, and air-conditioning (HVAC) systems. Designed for office and retail environments, this equipment has been adapted for 24 x 7 applications. Select direct air systems also use evaporative cooling, but all of them combine direct air and multi-stage direct expansion (DX) or chilled water. These units require low capital investment because they are generally available commercially, service technicians are readily available, and the systems typically consume very little water. Direct air units also yield good reported PUE (1.30–1.60).

On the other hand, commercial direct air rooftop units may require additional outside air filtration, as many units lack filtration adequate for introducing outside air directly into critical spaces, which increases the risk of particulate contamination.

Outside air units suitable for mission critical spaces require the capability of 100% air recirculation during certain air quality events (e.g., high pollution events and forest or brush fires) that will temporarily negate the efficiency gains of the units.

Figure 1. A direct expansion unit with an air-side economizer unit provides four operating modes including direct air, 100% recirculation, and two mixed modes. It is a well-established technology, designed to go from full stop (no power) to full cooling in 120 seconds or less, and allowing for PUE as low as 1.30-1.40.

Because commercial HVAC systems do not always meet the needs of mission critical facilities, owners and operators must identify the design limitations of any particular solution. Systems may integrate critical cooling and the air handling unit or provide a mechanical solution that incorporates air handling and chilled water. These units will turn off the other cooling mechanism when outside air cooling permits. Units that offer dual modes are typically not significantly more expensive. These commercial units require reliable controls that ensure that functional settings align with the mission critical environment. It is essential that the controls sequence be dialed in before performing thorough testing and commissioning of all control possibilities (see Figure 1). Commercial direct air rooftop units have been used successfully in Tier III and Tier IV applications (see Figure 2).

Figure 2. Chilled water with air economizer and wetting media provides nine operating modes including direct air plus evaporative cooling. With multiple operating modes, the testing regimen is extensive (required for all modes).

A key step in adapting commercial units to mission critical applications is considering the data center’s worst-case scenario. Most commercial applications are rated at 95°F (35°C), and HVAC units will typically allow some fluctuation in temperature and discomfort for workers in commercial settings. The temperature requirements for data centers, however, are more stringent. Direct air or chilled water coils must be designed for peak day—the location’s ASHRAE dry bulb temperature and/or extreme maximum wet bulb temperature. Systems must be commissioned and tested in Tier demonstrations for any event that would require 100% recirculation. If the unit includes evaporative cooling, makeup (process) water must meet all Tier requirements or the evaporative capacity must be excluded from the Tier assessment.

In Tier IV facilities, Continuous Cooling is required, including during any transition from utility power to engine generators. Select facilities have achieved Continuous Cooling using chilled water storage. In the case of one Tier IV site, the rooftop chilled water unit included very large thermal storage tanks to provide Continuous Cooling via the chilled water coil.

Controller capabilities and building pressure are also considerations. As these are commercial units, their controls are usually not optimized for the transition of power from the utility to the engine-generator sets and back. Typically, over- or under-pressure imbalance in a data center increases following a utility loss or mode change due to outside air damper changes and supply and exhaust fans starting and ramping up. This pressure can be significant. Uptime Institute consultants have even seen an entire wall blow out from over-pressure in a data hall. Facility engineers have to adjust controls for the initial building pressure and fine-tune them to adjust to the pressure in the space.

To achieve Tier III objectives, each site must determine if a single or shared controller will meet its Concurrent Maintainability requirements. In a Tier IV environment, Fault Tolerance is required in each operating mode to prevent a fault from impacting the critical cooling of other units. It is acceptable to have multiple rooftop units, but they must not be on a single control or single weather sensor/control system component. It is important to have some form of distributed or lead-lag (master/slave) system to control these components and enable them to operate in a coordinated fashion with no points of commonality. If any one component fails, the master control system will switch to the other unit, so that a fault will not impact critical cooling. For one Tier IV project demonstration, Uptime Institute consultants found additional operating modes while on site. Each required additional testing and controls changes to ensure Fault Tolerance.
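The "no points of commonality" requirement can be screened against a controls inventory before any on-site demonstration. The Python sketch below is illustrative only: the unit names, the component names, and the `shared_components` helper are hypothetical, and a real review would also need to cover power feeds, network paths, and sensor wiring.

```python
from collections import defaultdict


def shared_components(unit_dependencies):
    """Return control components that more than one cooling unit depends on.

    unit_dependencies maps a unit name to the set of controllers, sensors,
    or other control components it needs in order to operate (hypothetical
    inventory format).
    """
    users = defaultdict(set)
    for unit, components in unit_dependencies.items():
        for component in components:
            users[component].add(unit)
    # Any component with two or more dependent units is a point of commonality.
    return {c: sorted(u) for c, u in users.items() if len(u) > 1}


# Hypothetical inventory: both rooftop units read the same weather sensor.
inventory = {
    "RTU-1": {"controller-1", "weather-sensor-A"},
    "RTU-2": {"controller-2", "weather-sensor-A"},
}
print(shared_components(inventory))
# prints {'weather-sensor-A': ['RTU-1', 'RTU-2']}
```

An empty result does not prove Fault Tolerance; it only shows that the listed inventory has no shared component.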

DIRECT AIR PLUS EVAPORATIVE SYSTEMS

One economizer solution on the market, along with many other similar designs, involves a modular data center with direct air cooling and wetted media fed from a fan wall. The fan wall provides air to a flooded Cold Aisle in a layout that includes a contained Hot Aisle. This proprietary solution is modular and scalable, with direct air cooling via an air optimizer. This system is factory built with well-established performance across multiple global deployments. The systems have low reported PUEs and excellent partial load efficiency. Designed as a prefabricated modular cooling system and computer room, the system comes with a control algorithm that is designed for mission critical performance.

These units are described as being somewhat like a “data center in a box” but without the electrical infrastructure, which must be site designed to go with the mechanical equipment. Cost may be another disadvantage, as there have been no deployments to date in North America. In operation, the system determines whether direct air or evaporative cooling is appropriate, depending upon external temperature and conditions. Air handling units are integrated into the building envelope rather than placed on a rooftop.

Figure 3. Bladeroom’s prefabricated modular data center uses direct air with DX and evaporative cooling.

One company has used a prefabricated modular data center solution with integrated cooling optimization between indirect, evaporative, and DX cooling in Tier III facilities. In these facilities, a DX cooling system provides redundancy to the evaporative cooler. If there is a critical failure of the water supply to the evaporative cooler (or the water pump, which is measured by a flow switch), the building management system starts DX cooling and puts the air optimizer into full recirculation mode. In this setup, from a Tier objective perspective, the evaporative system and water system supporting it are not critical systems. Fans are installed in an N+20% configuration to provide resilience. The design plans for DX cooling to run less than 6% of the year at the installations in Japan and Australia; for the remainder of the year, it acts as redundant mechanical cooling able to meet 100% of the IT capacity. The redundant mechanical cooling system itself is an N+1 design (see Figures 3 and 4).
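The failover sequence described above can be sketched as a small decision function. This is a minimal illustration in Python, not the vendor's control algorithm: the `CoolingCommand` type, the `select_cooling` function, and the single `water_flow_ok` input (standing in for the flow switch) are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoolingCommand:
    dx_cooling_on: bool          # redundant mechanical (DX) cooling running
    evaporative_available: bool  # evaporative stage may be used
    full_recirculation: bool     # air optimizer closed to outside air


def select_cooling(water_flow_ok: bool) -> CoolingCommand:
    """Hypothetical BMS decision based on the evaporative water flow switch."""
    if not water_flow_ok:
        # Water supply or pump failure: start DX cooling and recirculate.
        return CoolingCommand(
            dx_cooling_on=True,
            evaporative_available=False,
            full_recirculation=True,
        )
    # Normal operation: the economizer chooses direct air or evaporative
    # cooling based on outside conditions (not modeled in this sketch).
    return CoolingCommand(
        dx_cooling_on=False,
        evaporative_available=True,
        full_recirculation=False,
    )


print(select_cooling(water_flow_ok=False))
```

Because the DX system can carry 100% of the IT load, the sketch treats loss of water as a mode change rather than a loss of critical cooling, which matches the Tier treatment described in the text.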

Figure 4. Supply air from the Bladeroom “air optimizer” brings direct air with DX and evaporative cooling into flooded cold aisles in the data center.

This data center solution has seen multiple Tier I and Tier II deployments, as well as several Tier III installations, providing good efficiency results. Achieving Tier IV may be difficult with this type of DX plus evaporative system because of Compartmentalization and Fault Tolerant capacity requirements. For example, Compartmentalization of the two different air optimizers is a challenge that must be solved; the louvers and louver controls in the Cold Aisles are not Fault Tolerant and would require modification; and Compartmentalization of electrical controls has not been incorporated into the concept (for example, one in the Hot Aisle and one in the Cold Aisle).

INDIRECT EVAPORATIVE COOLING SYSTEMS

Another type of economizer employs evaporative cooling to indirectly cool the data center using a heat exchanger. There are multiple suppliers of these types of systems. New technologies incorporate cooling media of hybrid plastic polymers or other materials. This approach excludes outside air from the facility. The result is a very clean solution; pollutants, over-pressure/under-pressure, and changes in humidity from outside events like thunderstorms are not concerns. Additionally, a more traditional, large chilled water plant is not necessary (although makeup water storage will be needed) because chilled water is not required.

As with many economizing technologies, greater efficiency can enable facilities to avoid upsizing the electrical plant to accommodate the cooling. A reduced mechanical footprint may mean lower engine-generator capacity, fewer transformers and switchgear, and an overall reduction in the often sizable electrical generation systems traditionally seen in a mission critical facility. For example, one data center eliminated an engine generator set and switchgear, saving approximately US$1M (although the cooling units themselves were more expensive than some other solutions on the market).

The performance of these types of systems is climate dependent. No external cooling systems are generally required in more northern locations. For most temperate and warmer climates some supplemental critical cooling will be needed for hotter days during the year. The systems have to be sized appropriately; however, a small supplemental DX top-up system can meet all critical cooling requirements even in warmer climates. These cooling systems have produced low observed PUEs (1.20 or less) with good partial load PUEs. Facilities employing these systems in conjunction with air management systems and Hot Aisle containment to supply air inlet temperatures up to the ASHRAE recommendation of 27°C (81°F) have achieved Tier III certification with no refrigeration or DX systems needed.

Indirect air/evaporative solutions have two drawbacks: a relative lack of skilled technicians to service the units and high water requirements. For example, one fairly typical unit on the market can use approximately 1,500 cubic meters (≈400,000 gallons) of water per megawatt annually. Facilities need to budget for water treatment and prepare for a peak water scenario to avoid an impactful water shortage for critical cooling.

Makeup water storage must meet Tier criteria. Water treatment, distribution, pumps, and other parts of the water system must meet the same requirements as the critical infrastructure. Water treatment is an essential, long-term operation performed using methods such as filtration, reverse osmosis, or chemical dosing. Untreated or insufficiently treated water can potentially foul or scale equipment, and thus, water-based systems require vigilance.

It is important to accurately determine how much makeup water is needed on site. For example, a Tier III facility requires 12 hours of Concurrently Maintainable makeup water, which means multiple makeup water tanks. Designing capacity to account for a worst-case scenario can mean handling and treating a lot of water. Over the 20- to 30-year life of a data center, thousands of gallons (tens of cubic meters) of stored water may be required, which becomes a site planning issue. Many owners have chosen to exceed 12 hours for additional risk avoidance. For more information, refer to Accredited Tier Designer Technical Paper Series: Makeup Water.
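A rough sizing calculation shows why the 12-hour requirement leads to multiple tanks. The Python sketch below is an assumption-laden illustration, not a Tier criterion: the peak consumption figure is hypothetical, and the rule that equal-size tanks must still hold the full volume with any one tank out of service is one simple way to read Concurrent Maintainability.

```python
def required_storage_m3(peak_use_m3_per_hour: float,
                        autonomy_hours: float = 12.0) -> float:
    """Volume needed to ride through the autonomy period at peak water use."""
    return peak_use_m3_per_hour * autonomy_hours


def tank_size_m3(required_m3: float, tank_count: int) -> float:
    """Size of each equal tank so that the full required volume remains
    available with any one tank removed from service (assumed rule)."""
    if tank_count < 2:
        raise ValueError("at least two tanks are needed to take one offline")
    return required_m3 / (tank_count - 1)


# Hypothetical peak-day consumption of 1.0 cubic meter per hour.
needed = required_storage_m3(peak_use_m3_per_hour=1.0)
print(needed)                              # 12.0
print(tank_size_m3(needed, tank_count=3))  # 6.0
```

Sizing from the peak-day rate rather than the annual average is the conservative choice, since evaporative water use is highest on the hottest days.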

WATER-SIDE ECONOMIZERS

Water-side economizer solutions combine a traditional water-cooled chilled water plant with heat exchangers to bypass chiller units. These systems are well known, which means that skilled service technicians are readily available. Data centers have reduced mechanical plant power consumption by 10–25% using water-side economizers. Systems of this type provide perhaps the most traditional form of economizer/mechanical systems power reduction. The technology uses much of the infrastructure that is already in place in older data centers, so it can be the easiest option to adopt. These systems introduce heat exchangers, so cooling comes directly from cooling towers and bypasses chiller units. For example, in a climate like that in the northern U.S., a facility can run water through a cooling tower during the winter to reject heat and supply the data center with cool water without operating a chiller unit.

Controls and automation for transitions between chilled water and heat exchanger modes are operationally critical but can be difficult to achieve smoothly. Some operators may bypass the water-side economizers if they don’t have full confidence in the automation controls. In some instances, operators may choose not to make the switch when a facility is not going to utilize more than four or six hours of economization. Thus energy savings may actually turn out to be much less than expected.

Relatively high capital expense (CapEx) investment is another drawback. Significant infrastructure must be in place on Day 1 to account for water consumption and treatment, heat exchangers, water pumping, and cooling towers. Additionally, the annualized PUE reduction that results from water-side systems is often not significant, most often in the 0.1–0.2 range. Data center owners will want a realistic cost/ROI analysis to determine if this cooling approach will meet business objectives.

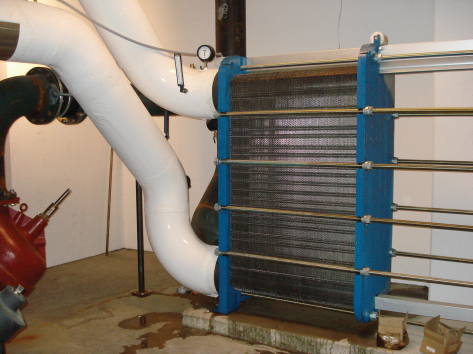

Water-side economizers are proven in Tier settings and are found in multiple Tier III facilities. Tier demonstrations are focused on the critical cooling system, not necessarily the economizer function. Because the water-side economizer itself is not considered critical capacity, Tier III demonstrations are performed under chiller operations, as with typical rooftop units. Demonstrations also include isolation of the heat exchanger systems and valves, and economizer control of functions is critical (see Figures 5 and 6). However, for Tier IV settings where Fault Tolerance is required, the system must be able to respond autonomously. For example, one data center in Spain had an air-side heat recovery system with a connected office building. If an economizer fault occurred, the facility would need to ensure it would not impact the data center. The solution was to have a leak detection system that would shut off the economizer to maintain critical cooling of the data hall in isolation.

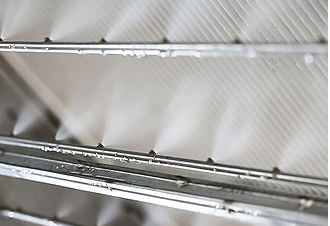

Figure 6. The cooling tower takes in hot air from the sides and blows hot, wet air out of the top, cooling the condenser water as it falls down the cooling tower. In operation it can appear that steam is coming off the unit, but this is a traditional cooling tower.

CRACs WITH PUMPED REFRIGERANT ECONOMIZER

Another system adds an economizer function to a computer room air conditioner (CRAC) unit using a pumped liquid refrigerant. In some ways, this technology operates similarly to standard refrigeration units, which use a compressor to convert a liquid refrigerant to a gas. However, instead of using a compressor, newer technology blows air across a radiator unit to reject the heat externally without converting the liquid refrigerant to a gas. This technology has been implemented and tested in several data centers with good results in two Tier III facilities.

The advantages of this system include low capital cost compared to many other mechanical cooling solutions. These systems can also be fairly inexpensive to operate and require no water. Because they use existing technology that has been modified just slightly, it is easy to find service technicians. It is a proven cooling method with a low estimated PUE (1.25–1.35), a substantial improvement over the 1.60–1.80 PUE that some modern CRACs yield. These systems offer distributed control of mode changes. In traditional facilities, switching from chillers to coolers typically happens using one master control. A typical DX CRAC installation will have 10–12 units (or even up to 30) that will self-determine the cooling situation and individually select the appropriate operating mode. Distributed control is less likely to cause a critical cooling problem even if one or several units fail. Additionally, these units do not use any outside air. They recirculate inside air, thus avoiding any outside air issues like pollution and humidity.

The purchase of DX CRAC units with dry coolers does require more CapEx investment, a 50–100% premium over traditional CRACs. Other cooling technologies may offer higher energy efficiency. Additional space is required for the liquid pumping units, typically on the roof or beside the data center.

Multiple data centers that use this technology have achieved Tier III Certification. From a Tier standpoint, these CRACs are the same as the typical CRAC. In particular, the distributed control supports Tier III requirements, including Concurrent Maintainability. The use of DX CRAC systems needs to be considered early in the building design process. For example, the need to pump refrigerant limits the number of building stories. With a CRAC in the computer room and condenser units on the roof, two stories seem to be the building height limit at this time. The suitability of this solution for Tier IV facilities is still undetermined. The local control mechanism is an important step to Fault Tolerance, and Compartmentalization of refrigerant and power must be considered.

OPERATIONS CONSIDERATIONS WITH ECONOMIZERS

Economizer solutions present a number of operational ramifications, including short- and long-term impacts, risk, CapEx, commissioning, and ongoing operating costs. An efficiency gain is one obvious impact, although an economizer can increase some operational maintenance expenses:

• Several types require water filtration and/or water treatment

• Select systems require additional outside air filtration

• Water-side economizing can require additional cooling tower maintenance

Unfortunately in certain applications, economization may not be a sustainable practice overall, from either a cost or “green” perspective, even though it reduces energy use. For example, high water use is not an ideal solution in dry or water-limited climates. Additionally, extreme use of materials such as filters and chemicals for water treatment can increase costs and also reduce the sustainability of some economizer solutions.

CONCLUSION

Uptime Institute experience has amply shown that, with careful evaluation, planning, and implementation, economizers can be effective at reducing energy use and costs without sacrificing performance, availability, or Tier objectives. Even so, modern data centers have begun to see diminishing PUE returns overall, with many data centers experiencing a leveling off after initial gains. These and all facilities can find it valuable to consider whether investing in mechanical efficiency or broader IT efficiency measures such as server utilization and decommissioning will yield the most significant gains and greater holistic efficiencies.

Economizer solutions can introduce additional risks into the data center, where changes in operating modes increase the risk of equipment failure or operator error. These multi-modal systems are inherently more complex and have more components than traditional cooling solutions. In the event of a failure, operators must know how to manually isolate the equipment or transition modes to ensure critical cooling is maintained.

Any economizer solution must fit both the uptime requirement and business objective, especially if it uses newer technologies or was not originally designed for mission critical facilities. Equally important is ensuring that system selection and installation takes Tier requirements into consideration.

Many data centers with economizers have attained Tier Certification; however, in the majority of facilities, Uptime Institute consultants discovered flaws in the operational sequences or system installation during site inspections that were defeating Tier objectives. In all cases so far, the issues were correctible, but extra diligence is required.

Many economizer solutions are newer technologies, or new applications of existing technology outside of their original intended environment; therefore, careful attention should be paid to elements such as control systems to ensure compatibility with mission critical data center operation. Single shared control systems or mechanical system control components are a problem. A single controller, workstation, or weather sensor may fault or require removal from service for maintenance/upgrade over the lifespan of a data center. Neither the occurrence of a component fault nor taking a component offline for maintenance should impact critical cooling. These factors are particularly important when evaluating the impact of economizers on a facility’s Tier objective.

Despite the drawbacks and challenges of properly implementing and managing economizers, their increased use represents a trend for data center operational and ecological sustainability. For successful economizer implementation, designers and owners need to consider the overarching design objectives and data center objectives to ensure those are not compromised in pursuit of efficiency.

ECONOMIZER SUCCESS STORY

Digital Realty’s Profile Park facility in Ireland implemented compressor-less cooling by employing an indirect evaporative economizer, using technology adapted from commercial applications. The system is a success, but it took some careful consideration, adaptation, and fine-tuning to optimize the technology for a Tier III mission critical data center.

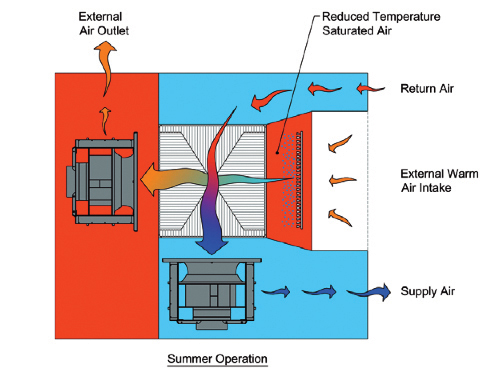

Figure 7. The unit operates as a scavenger air system (red area at left) taking the external air and running it across a media. That scavenger air is part of the evaporative process, with the air used to cool the media directly or cool the return air. This image shows summer operation where warm outside air is cooled by the addition of moisture. In winter, outside air cools the return air.

Achieving the desired energy savings first required attention to water (storage, treatment, and consumption). The water storage needs were significant: approximately 60,000 liters for 3.8 megawatts (MW), equivalent to about 16,000 gallons. Water treatment and filtration are critical in this type of system and were a significant challenge. The facility implemented very fine filtration at a particulate size of 1 micron (which is 10 times stricter than would typically be required for potable water). This type of indirect air system eliminates the need for chiller units but does require significant water pressure.

To achieve Tier III Certification, the system also had to be Concurrently Maintainable. Valves between the units and a loop format with many valves separating units, similar to what would be used with a chilled water system, helped the system meet the Concurrent Maintainability requirement. Two valves in series are located between each unit on a bi-directional water loop (see Figures 7 and 8).

As with any installation that makes use of new technology, the facility required additional testing and operations sequence modification for a mission critical Tier III setting. For example, initially the units were overconsuming power, not responding to a loss of power as expected, and were draining all of the water when power was lost. After adjustments, the system performance was corrected.

Figure 8 (a and b). The system requires roughly twice the fan energy needed for many typical rooftop units or CRACs but does not use a compressor refrigeration unit, which reduces some of the energy use. Additionally, the fans themselves are high efficiency with optimized motors. Thus, while the facility has approximately twice the number of fans and twice the airflow, it can run many more of the small units more efficiently.

Ultimately, this facility with its indirect cooling system was Tier III Certified, proving that it is possible to sustain mechanical cooling year-round without compressors. Digital Realty experienced a significant reduction in PUE with this solution, improving from 1.60 with chilled water to 1.15. With this anticipated annualized PUE reduction, the solution is expected to result in approximately €643,000 (US$711,000) in savings per year. Digital Realty was recognized with an Uptime Institute Brill Award for Efficient IT in 2014.
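The relationship between a PUE improvement and annual savings can be sketched with simple arithmetic, since PUE is total facility energy divided by IT energy. The Python example below is illustrative only: the IT load and electricity price are hypothetical inputs, the load is assumed constant all year, and the result is not intended to reproduce the savings figure reported for this facility.

```python
HOURS_PER_YEAR = 8760


def annual_savings(it_load_kw: float, pue_before: float, pue_after: float,
                   price_per_kwh: float) -> float:
    """Estimated yearly cost savings from a PUE reduction.

    Facility power is approximated as IT load x PUE, so the energy saved
    is IT load x (PUE before - PUE after) x hours in a year.
    """
    saved_kwh = it_load_kw * (pue_before - pue_after) * HOURS_PER_YEAR
    return saved_kwh * price_per_kwh


# Hypothetical 1,000 kW IT load at a hypothetical 0.10 per kWh,
# using the PUE values quoted above (1.60 improving to 1.15).
print(round(annual_savings(1000, 1.60, 1.15, 0.10)))  # 394200
```

Annualized PUE already averages over seasons, but a partially loaded facility would see proportionally smaller savings than this constant-load estimate.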

CHOOSING THE RIGHT ECONOMIZER SOLUTION FOR YOUR FACILITY

Organizations that are considering implementing economizers—whether retrofitting an existing facility or building a new one—have to look at a range of criteria. The specifications of any one facility need to be explored with mechanical, electrical, plumbing (MEP), and other vendors, but key factors to consider are:

Geographical area/climate: This is perhaps the most important factor in determining which economizer technologies are viable options for a facility. For example, direct outside air can be a very effective solution in northern locations that have an extended cold winter, but select industrial environments can preclude the use of outside air because of high pollutant content. Other solutions will work better in tropical climates than in arid regions, where water-side solutions are less appropriate.

New build or retrofit: Retrofitting an existing facility can eliminate available economizer options, usually due to space considerations but also because systems such as direct air plus evaporative and DX CRAC need to be incorporated at the design stage as part of the building envelope.

Supplier history: Beware of suppliers from other industries entering the data center space. Limited experience with mission critical functionality including utility loss restarts, control architecture, power consumption, and water consumption can mean systems need to be substantially modified to conform to 24 x 7 data center operating objectives. New suppliers are entering into the data center market, but consider which of them will be around for the long term before entering into any agreements to ensure parts supplies and skilled service capabilities will be available to maintain the system throughout its life cycle.

Financial considerations: Economizers have both CapEx and operating expense (OpEx) impact. Whether an organization wants to invest capital up front or focus on long-term operating budgets depends on the business objectives.

Some general CapEx/OpEx factors to keep in mind include:

• Select newer cooling technology systems are high cost, and thus require more up front CapEx.

• A low initial capital outlay with higher OpEx may be justified in some settings.

• Enterprise owners/operators should consider insertion of economizers into the capital project budget with long-term savings justifications.

ROI objectives: As an organization, what payback horizon is needed for significant PUE reduction? Is it one to two years, five years, or ten? The assumptions for the performance of economizer systems should utilize real-world savings, as expectations for annual hours of use and performance should be reduced from the best-case scenarios provided by suppliers. A simple payback from the energy savings should be less than three to five years.
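A simple payback estimate that discounts supplier projections can be sketched as follows. The Python example is a minimal illustration under stated assumptions: the `derating` factor of 0.7 and all monetary figures are hypothetical, and the model ignores maintenance, water, and financing costs.

```python
def simple_payback_years(capex_premium: float,
                         vendor_annual_savings: float,
                         derating: float = 0.7) -> float:
    """Years to recover the extra capital cost from energy savings.

    derating scales the supplier's best-case savings down to a more
    realistic figure (hypothetical default of 0.7).
    """
    if not 0 < derating <= 1:
        raise ValueError("derating must be in the range (0, 1]")
    realistic_savings = vendor_annual_savings * derating
    if realistic_savings <= 0:
        raise ValueError("savings must be positive")
    return capex_premium / realistic_savings


# Hypothetical project: 1,000,000 premium, 400,000 per year claimed savings.
years = simple_payback_years(1_000_000, 400_000)
print(f"{years:.1f} years")  # 3.6 years
```

In this example the undiscounted payback would be 2.5 years, so the derating alone moves the project from comfortably inside the three-to-five-year window to the middle of it.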

Depending on an organization’s status and location, it may be possible to utilize sustainability or alternate funding. When it comes to economizers, geography/climate, and ROI are typically the most significant decision factors. Uptime Institute’s FORCSS model can aid in evaluating the various economizer technology and deployment options, balancing Financial, Opportunity, Risk, Compliance, Sustainability, and Service Quality considerations (see more about FORCSS at https://journal.uptimeinstitute.com/introducing-uptime-institutes-forcss-system/).

Keith Klesner is Uptime Institute’s Vice President of Strategic Accounts. Mr. Klesner’s career in critical facilities spans 16 years and includes responsibilities ranging from planning, engineering, design, and construction to start-up and ongoing operation of data centers and mission critical facilities. He has a B.S. in Civil Engineering from the University of Colorado-Boulder and an MBA from the University of LaVerne. He maintains status as a professional engineer (PE) in Colorado and is a LEED Accredited Professional.